Yes. Google can crawl, render, and index JavaScript content on the web.

But this statement comes with caveats. Many common uses of JavaScript can damage your website’s search visibility.

For a JavaScript-powered website to be successful on Google, it needs to be optimized. That’s what JavaScript SEO is for.

In this article, I will explain why you need to consider JavaScript SEO if your website uses JavaScript (and it most likely does!).

JavaScript SEO history in a nutshell

Before 2019, Google’s relationship with JavaScript was complicated.

In 2014, Google published a blog post announcing that it had started rendering JavaScript on web pages to better understand their content.

A year later, in 2015, Google stated that it was “generally able to render and understand your web pages like modern browsers” and was therefore deprecating its obsolete AJAX crawling scheme.

But in fact, Google’s problems with processing JavaScript pages were far from solved.

Working with so many enterprise websites which, at that point, already heavily relied on JavaScript, Bartosz Góralewicz knew how difficult it was to rank high with a JavaScript-dependent website in those days. So he created his famous experiment to prove Google wasn’t ready to handle JavaScript just like HTML.

One key obstacle was that, for rendering and indexing purposes, Google used an old version of Chrome: Chrome 41. It wasn’t able to render modern JavaScript features like a user’s browser would. This issue was the main reason for the rise of JavaScript SEO, a subset of technical SEO focused on making sure Google can process any content injected with JavaScript. The goal of JavaScript SEO was to let your website keep using JavaScript without sacrificing any search visibility.

Then, on May 7th, 2019, Google announced an update to its rendering service. From that point on, Google no longer used Chrome 41. Instead, Googlebot would be updated in lockstep with Chrome. This meant Google was finally able to render pages more or less like the Chrome browser you might be using.

But was that the end for JavaScript SEO?

No.

It’s definitely a good thing that Google is now able to support new JavaScript features, but for the average SEO or developer, it doesn’t change much.

There are still a lot of other issues we should be focusing on when it comes to JavaScript websites.

Don’t forget about the following issues:

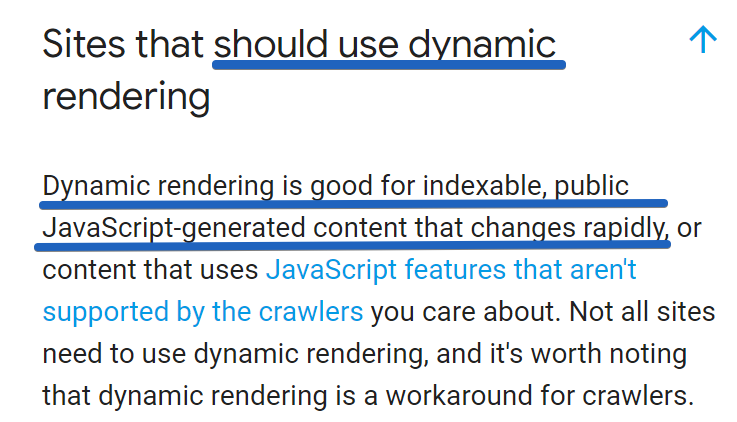

- You still have to wait until Google is able to render a JavaScript page (which can take days or even weeks). Google is aware of this, so for dynamic, rapidly changing websites, it recommends serving Googlebot a static version (this is called dynamic rendering).

- Other search engines still have major issues with rendering JavaScript at scale.

- Facebook, LinkedIn, and Twitter struggle with client-side rendered JavaScript websites. You risk these social media websites not being able to show the thumbnails and descriptions of your website, which can negatively affect traffic.

- No matter how good Google’s Web Rendering Service is, poor JavaScript SEO can have a negative impact on your success in the search engines.

- You should STILL check if Google can render and index your website.

Let’s look at each of these points in more detail.

1. Rendering Delays

Supported features are one thing, but rendering delays are another.

Why should you bother?

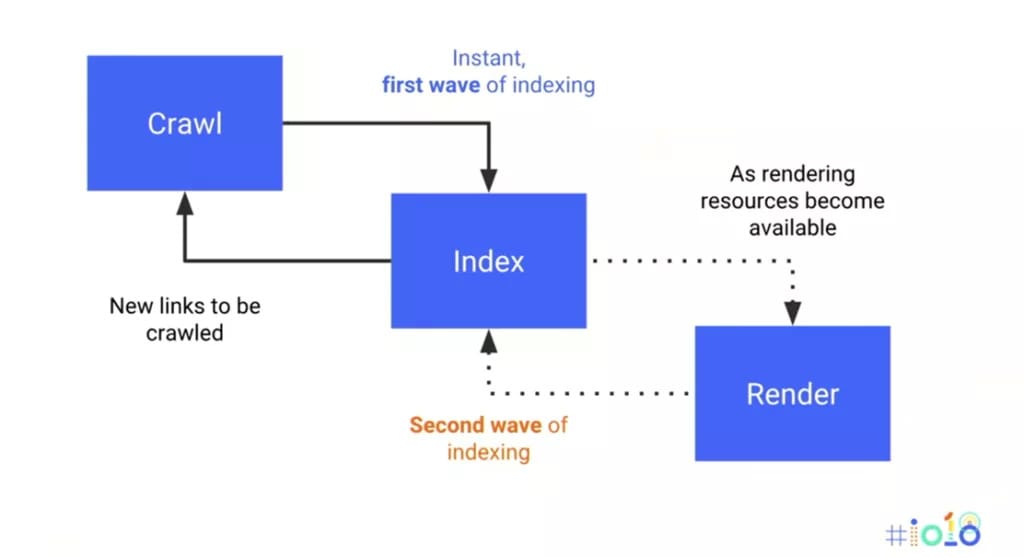

Google STILL has two waves of indexing.

- The first wave (HTML) is instant.

- However, the second wave (rendering) is delayed. It happens only when rendering resources become available.
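To see which of the two waves a piece of content depends on, you can look at the HTML your server returns before any script runs. The sketch below is only an illustration using Python’s standard library: the function names and the sample markup are hypothetical, and it checks your raw HTML, not what Google actually indexed.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def text_in_initial_html(raw_html: str) -> str:
    """Return the text present in the HTML before any JavaScript runs."""
    parser = _TextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def available_without_rendering(raw_html: str, phrase: str) -> bool:
    """True if the phrase is already in the initial HTML (hypothetical helper)."""
    return phrase.lower() in text_in_initial_html(raw_html).lower()
```

If a key phrase is missing from the initial HTML, it can only be picked up once the page has been rendered, so it is subject to the delay described above.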

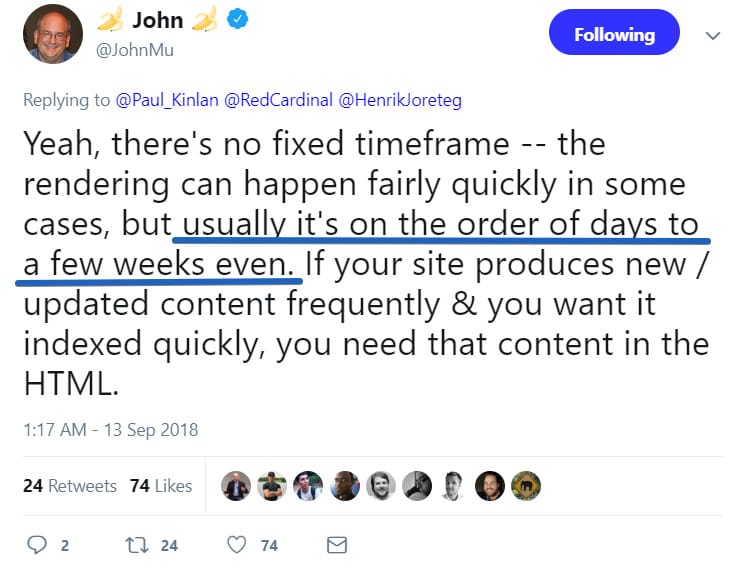

How long should you wait?

Google’s John Mueller discussed it on Twitter: “Usually it’s on the order of days to a few weeks even.”

I’ve seen some articles suggesting that when Google supports the most recent JS features, we’ll no longer need to do dynamic rendering (serving bots a static version) or hybrid rendering.

Well, as long as Google’s rendering JS is not instant, I CANNOT agree with this.

Imagine you have a car listing website with client-side rendered JavaScript.

This is a very dynamic industry; ads can become outdated in a matter of hours or days.

There’s the serious risk that Google will render and index your content AFTER it expires…

For rapidly changing content, Google recommends dynamic rendering.
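At its core, dynamic rendering means deciding per request whether to serve a static, prerendered version or the regular client-side app. Here is a minimal sketch of that decision, assuming you detect crawlers by user-agent substring. The list of tokens is illustrative and not exhaustive, and the function names and the "prerendered"/"client-side" labels are hypothetical.

```python
import re

# Illustrative, non-exhaustive list of crawler user-agent tokens (an assumption;
# extend it to match the bots that matter for your website).
BOT_PATTERN = re.compile(
    r"googlebot|bingbot|facebookexternalhit|twitterbot|linkedinbot",
    re.IGNORECASE,
)


def is_crawler(user_agent: str) -> bool:
    """Return True if the user-agent string looks like a known crawler."""
    return bool(BOT_PATTERN.search(user_agent or ""))


def choose_variant(user_agent: str) -> str:
    """Pick which version of the page to serve for this request."""
    return "prerendered" if is_crawler(user_agent) else "client-side"
```

In a real setup, the "prerendered" branch would return static HTML produced by a headless browser or a prerendering service, with the same content users see.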

2. Other Search Engines Struggle with JavaScript

If traffic from other search engines is important for you (and it should be, as Bing had a “24 percent market share in the US” as of April 2018), you should be aware of the fact that these search engines struggle with crawling and indexing JavaScript websites. Period.

If you want to have a JS website that is successful in these search engines, the best way is to use dynamic rendering or hybrid rendering.

3. Facebook and Twitter Don’t Execute JavaScript

Similarly, Facebook, LinkedIn, and Twitter don’t execute JavaScript.

Let me show you what can happen if you don’t serve Open Graph tags and Twitter Card tags in the initial HTML:

As you can see, there is no hero image, nor description. It definitely doesn’t encourage users to click on such a link.

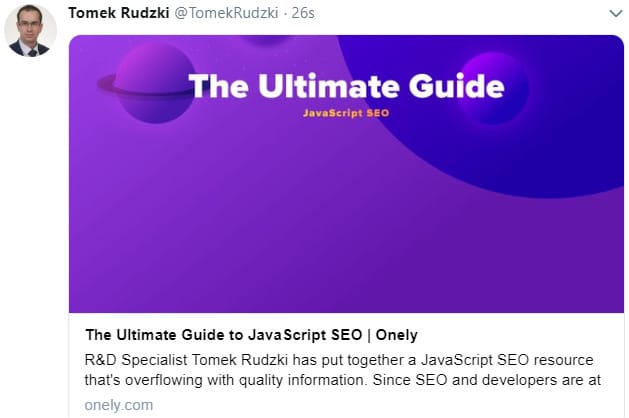

Please compare it to our website.

Do you see the difference?

| Bad | Good |


Traffic from social media websites is huge.

Imagine you are Wish.com and most of your revenue relies on social media platforms…

Seems like a big deal, doesn’t it?
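Because these platforms read only the initial HTML, you can check your raw server response for the tags they rely on. The following sketch uses Python’s standard library. The set of required tags is my assumption of a sensible minimum, and the function name is hypothetical.

```python
from html.parser import HTMLParser

# Assumed minimum set of tags for a rich link preview.
REQUIRED_TAGS = ("og:title", "og:description", "og:image", "twitter:card")


class _MetaCollector(HTMLParser):
    """Collects <meta> tags keyed by their property or name attribute."""

    def __init__(self):
        super().__init__()
        self.found = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        key = attr.get("property") or attr.get("name")
        content = attr.get("content")
        if key and content:
            self.found[key.lower()] = content


def missing_social_tags(raw_html: str, required=REQUIRED_TAGS) -> list:
    """Return the required tags that are absent from the initial HTML."""
    collector = _MetaCollector()
    collector.feed(raw_html)
    collector.close()
    return [tag for tag in required if tag not in collector.found]
```

Run it against the HTML your server returns, not the DOM in your browser. Tags injected by JavaScript will show up as missing, which is exactly what the social media crawlers see.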

4. Poor JavaScript SEO = Problems

This equation will always be true.

Even if Google makes rendering JS perfect on their end, poorly implemented JS SEO may still lead to a serious SEO disaster.

You should ensure that:

- Google can access the content hidden under tabs.

- Google can navigate through your internal links. In particular, make sure Google can access all your paginated pages (it’s easy to break pagination with infinite scroll or JavaScript events).

- Important JS files aren’t blocked in robots.txt.

- JS doesn’t remove any content.

Of course, the above list is not exhaustive; however, it should help you fix the most common JS SEO issues.
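One item on this list, JS files blocked in robots.txt, is easy to check automatically. Below is a rough sketch using Python’s built-in `urllib.robotparser`. The helper name and the example URLs are hypothetical, and Python’s parser does not necessarily resolve conflicting Allow/Disallow rules the way Googlebot does, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, urls, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Example with hypothetical paths: the JS bundle is blocked, the CSS file is not.
robots = "User-agent: *\nDisallow: /assets/js/\n"
resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
print(blocked_resources(robots, resources))
```

Any script that shows up in the result is one Google can’t fetch, so content that depends on it may never be rendered.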

5. You Should Still Check if Google Can Render and Index Your Website

Executing JS at scale is a really resource-intensive process.

It may sound a bit cliché, but there are over 130 trillion documents on the web. It’s a huge challenge even for giants like Google (and any other search engine for that matter) to crawl and render the entire internet.

Google’s timeouts

Based on my experience, if a server responds really slowly, Google may skip requesting your JS files and index the content as it is. After all, there are trillions of other documents waiting for Googlebot to visit.

This has strong implications. If your server/APIs respond slowly, you risk Google not indexing content generated by JS.

I expect the issue will still exist, no matter which JS features Google ends up supporting.
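A simple way to keep an eye on this is to time the endpoints your JavaScript depends on and flag the slow ones. The sketch below is an assumption-heavy illustration: the function names are hypothetical, and the one-second budget is an arbitrary placeholder, not a timeout documented by Google.

```python
import time
from typing import Callable, Dict, Iterable


def measure_seconds(fetch: Callable[[str], object], url: str) -> float:
    """Time a single call to fetch(url) in seconds."""
    start = time.perf_counter()
    fetch(url)
    return time.perf_counter() - start


def slow_endpoints(
    urls: Iterable[str],
    fetch: Callable[[str], object],
    budget_seconds: float = 1.0,  # arbitrary placeholder, tune it for your site
) -> Dict[str, float]:
    """Return the URLs whose response time exceeded the budget."""
    timings = {url: measure_seconds(fetch, url) for url in urls}
    return {url: t for url, t in timings.items() if t > budget_seconds}
```

The `fetch` argument is any callable that downloads a URL, for example `lambda u: urllib.request.urlopen(u, timeout=10).read()`. Passing it in keeps the timing logic separate from the HTTP client you use.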

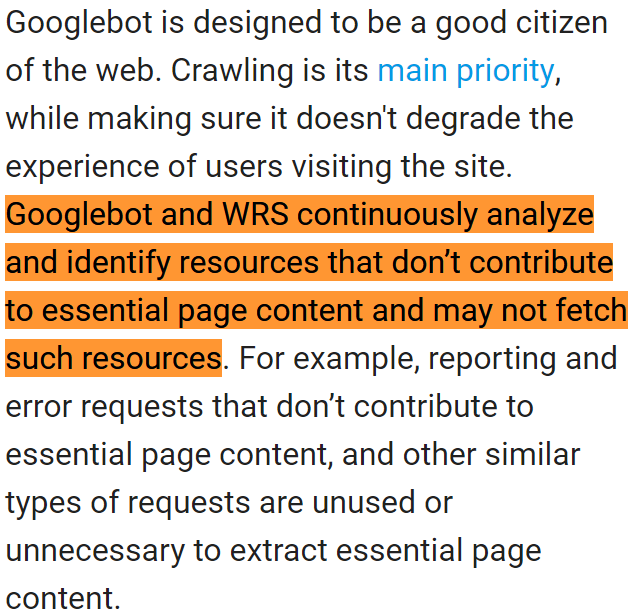

Google’s algorithms

There will still be a risk that Google may not fetch some resources just because its algorithms decided they don’t contribute to essential page content.

Here’s an excerpt from the official Google documentation:

It’s just an algorithm; it can still make mistakes, which can lead to a disastrous impact on your presence in Google Search.

Summary

I have written numerous times about how great JavaScript is. It’s the future of web development. And Google did a great job by updating its Web Rendering Service to support new JavaScript features.

But JavaScript SEO isn’t only about checking if Google can successfully render your website. It goes far beyond that.

Don’t sabotage your business. If you want to have a successful JavaScript website in Google, you should still perform additional steps to ensure Google is properly crawling and indexing your content, and ranking it high.