Search engines shouldn’t index all pages on your website.

Even if you think everything on your site is just fantastic, most websites have tons of pages that simply don’t belong in the search results. And if you let search engines index those pages, you may face negative consequences.

That’s why you need an indexing strategy for your site. Its key elements are:

- Deciding which pages you want search engines to index and using appropriate methods to maximize their chances of getting indexed,

- Deciding which pages shouldn’t be indexed and how to exclude them from search without limiting your potential search visibility.

Deciding which pages should or shouldn’t be indexed is difficult. You might find some guidelines and tips for particular pages, but you’ll often be on your own.

And choosing the appropriate methods to exclude those pages from search results requires even more consideration. Should you use the noindex tag or canonical tag, block the page in robots.txt, or use a permanent redirect?

This article will outline the decision-making process that will allow you to create a custom indexing strategy for your website.

While you may run into edge cases that don’t adhere to the logic I propose, the process underlined below will give you great results in the overwhelming majority of cases.

Why some pages shouldn’t be indexed

There are two main reasons why you shouldn’t want search engines to index all of your pages:

- It helps to optimize crawl budget,

- A lot of low-quality content that’s indexable might damage how search engines see your website.

Optimize your crawl budget

Search engines bots can crawl a limited number of pages on a given website. The Internet is infinitely large, and crawling everything would exceed the resources search engines have.

The amount of time and resources search engine bots spend on crawling your website is called crawl budget. If you waste the crawl budget on low-quality pages, there might not be enough for the most valuable ones that actually should be indexed.

By taking the time to decide which pages you want to be indexed, you can optimize your crawl budget and ensure search engine bots don’t waste their resources on less important pages.

If you want to learn more about crawl budget optimization, check out our Ultimate Guide to Crawl Budget.

Don’t let low-quality content damage your website

If search engines realize that you have a lot of low-quality content, they might decide to stop crawling your website as often.

Tomek Rudzki, in his Ultimate Guide to Indexing SEO, called this “collective responsibility.”

This shows how ranking, crawling, and indexing are interconnected.

Methods for controlling indexing

There are various methods you can use to control the indexing of your pages, including:

- Noindex robots meta tag,

- Disallow directive in robots.txt,

- Canonical tag,

- Permanent redirect,

- XML sitemap.

Each of the above methods has its own use and function.

Noindex robots meta tag

<meta name="robots" content="noindex">

If you add the above directive to your page’s HTML <head> section, search engine bots will understand that they shouldn’t index it. It will prevent the page from appearing on the search engines’ results page.

You should use this tag if you don’t want the page to be indexed, but you still want search engine bots to crawl your page and, e.g., follow the links on that page.

Disallow directive in robots.txt

User-agent: * Disallow: /example/page.html

The disallow directive in the robots.txt file allows you to block search engines’ access to the page. If a search engine bot respects the directive, it won’t crawl the disallowed pages, and consequently, they won’t be indexed.

Since the disallow directive restricts crawling, this method can help you save your crawl budget.

Note: The disallow directive is not a proper way of blocking access to your sensitive pages. Malicious bots ignore the robots.txt file and can still access the content. If you want to make sure some pages are not accessible to all bots, it’s better to block them with a password.

Canonical tag

<link rel="canonical" href="https://www.example.com/page.html">

A canonical tag is an HTML element that tells search engines which duplicate URLs are the original ones.

Using the canonical tag, you specify exactly which version of a page you want to be indexed and appear in the search results. Without the canonical tag, you have no control over which version of your page gets indexed.

Search engine bots still need to crawl the page to discover the canonical tag, so using it won’t help you save your crawl budget.

Remember that Google can ignore improperly created canonical tags. If Google ignores your canonical tag, you can spot it thanks to the “Duplicate, Google chose different canonical than user” status in GSC.

Permanent redirect

The 301 redirect is an HTTP response code that indicates a permanent redirect. It specifies that the requested page has a new location, and the old page was removed from the server.

When you use a 301 redirect, users and search engine bots won’t access the old URL. Instead, traffic and ranking signals will be redirected to the new page. You’ll see the “Page with redirect” status in your Google Search Console.

Using the 301 redirect is a good method of saving the crawl budget. You’re decreasing the number of pages available on your website, so search engine bots have less content to crawl.

However, beware of creating redirect chains or loops as they may actually contribute to crawl budget issues and result in the “Redirect error” status in Google Search Console.

Remember that you should redirect only to a related page. Redirecting to an unrelated page can confuse users. Additionally, search engines bots might not follow the redirect and treat the page as a soft 404.

XML Sitemaps

An XML sitemap is a text file that lists the URLs you want search engines to index. Its purpose is to help search engine bots easily find the pages you care about.

A well-optimized sitemap not only directs search engines to your valuable pages but also helps you save your crawl budget. Without it, the bots need to crawl the whole site to discover your valuable content.

That’s why sitemaps should list only the indexable URLs on your website. This means that the pages you put in the sitemap should be:

- Canonical,

- Not blocked by the noindex robots meta tag,

- Not blocked by the disallow directive in robots.txt, and

- Responding with 200 status code.

You can learn more about optimizing sitemaps in our Ultimate Guide to XML Sitemaps.

How to decide which pages should or shouldn’t be indexed

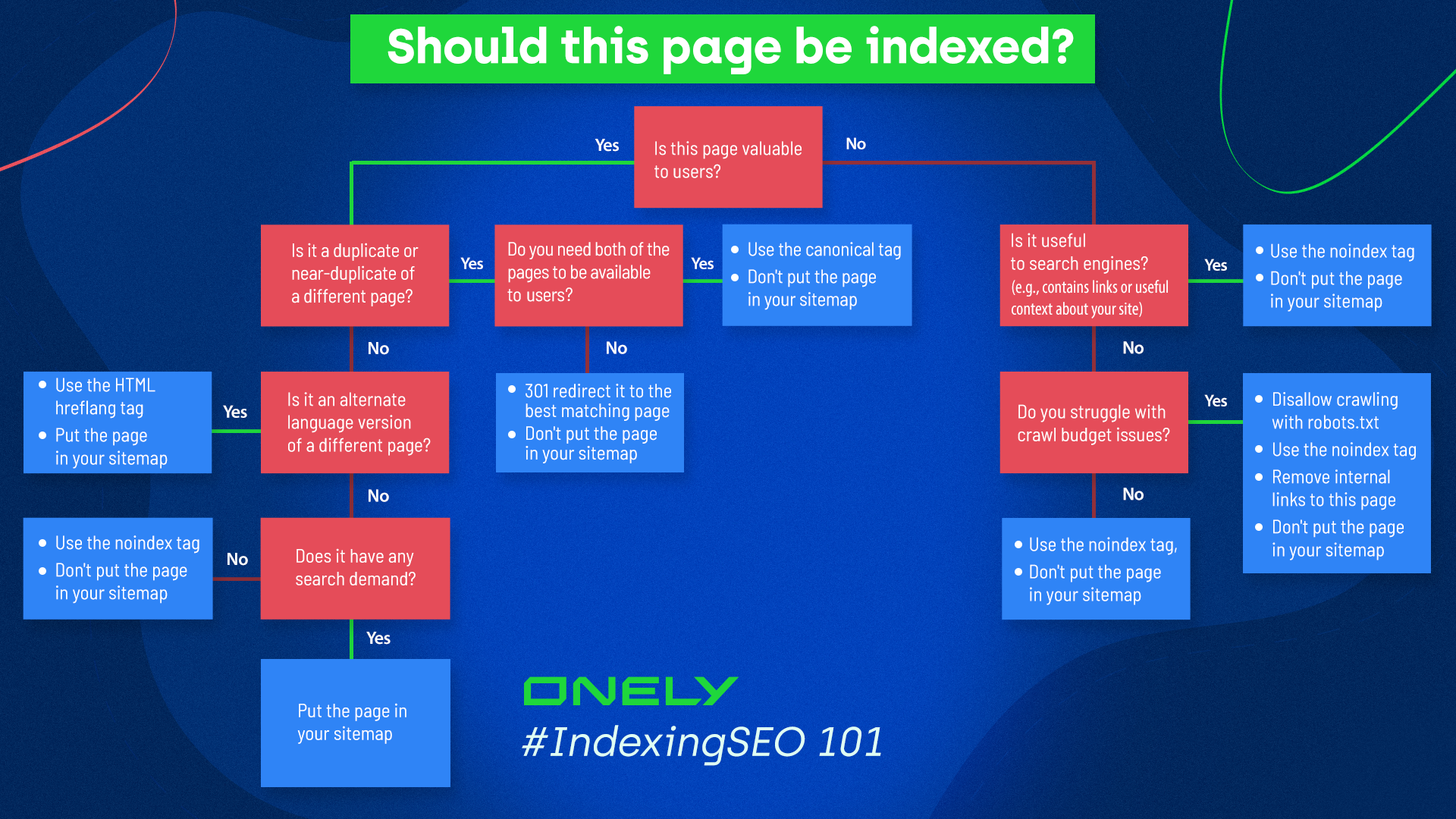

To help you decide which pages should or shouldn’t be indexed, I created a decision tree with all the essential questions you need to answer.

As you can see above, the fundamental question is: is this page valuable to anyone?

There are three possible answers to that question:

- The page is valuable to search engine users (and search engines),

- The page is valuable to search engines,

- The page is not valuable to anyone.

The bottom line is that only pages valuable to users should be indexed. However, even in that category, there are types of pages that shouldn’t be indexed.

Let’s break it down.

Pages valuable to users

A page is valuable to search engine users if it provides an answer to their search or allows them to navigate to the answer.

In most cases, if a page is valuable to users, it should be indexed. However, there still might be a situation when a page is valuable to users but shouldn’t be indexed.

Pages valuable to users that should be indexed

A page should be indexed if:

- It provides high quality, unique content that brings traffic,

- It’s an alternate language version of a different high-quality page (if applicable).

High-quality, unique content

High-quality, unique pages that bring traffic to your site should definitely make it to your sitemap. Ensure they aren’t blocked by robots.txt, and they don’t have the noindex meta robots tag.

Pay particular attention to the most valuable pages for your business. They are the ones that usually bring the most conversion. Pages like:

- Home page,

- About us and contact pages,

- Pages with information about the service you provide,

- Blog articles showing your expertise,

- Pages with specific items (like eCommerce products),

should always be indexable, and you should regularly monitor their indexing.

Alternate language version

Translated content is not treated as duplicate by search engines. In fact, search engines want to know if you have multiple language versions available to present the most suitable version to users in different countries.

If you have an alternate language version of a page, you should specify it with a hreflang tag and put the page in your sitemap.

You can specify the hreflang tags in your sitemap, HTML, or both. Hreflang tags used in sitemaps are perfectly fine from a search engine perspective. However, they might be difficult to verify with SEO tools or browser plugins. For that reason, the recommended way of adding the tag is in HTML code and the sitemap, or only in the HTML code.

Remember that each page needs to specify all language versions, including its own language.

Pages valuable to users that shouldn’t be indexed

In some situations, pages can be valuable to users, but they still shouldn’t be indexed. The situations include:

- Duplicate or near-duplicate content,

- Pages with no search demand.

Duplicate or near-duplicate of a different page

Search engine bots might consider a page duplicate or near-duplicate if:

- Two or more different URLs lead to the same page,

- Two different pages have very similar content.

One of the most common examples of duplicate content is filtered category pages on eCommerce sites. Users can apply filters to narrow down the products and find what they are looking for quicker. Unfortunately, each applied filter might save the parameters in the URL, creating multiple URLs leading to the same page.

For example, store.com/dresses/item and store.com/dresses/item?color=yellow might point to the same content.

Other reasons for duplicate or near-duplicate content involve:

- Having different URLs for mobile and desktop versions,

- Having a print version of your website, or

- Creating duplicate content by mistake.

The risks of having indexable duplicate content include:

- Having no control over which version might appear in search results. For example, if you have print and regular versions available, search engines might show the print version in search.

- Dividing the ranking signals between multiple URLs.

- Drastically increasing the number of URLs search engines need to crawl.

- Lowering your position in SERPs if search engines decide you want to manipulate the ranking (a rare consequence).

To avoid the negative consequences of having duplicate content, you should aim to consolidate it. The main ways to do it include canonical tags and 301 redirects.

Canonical tags are the best option if you need all pages to be available for users.

An example of duplicate content that should stay available on your site is one that improves User Experience. For example, when users filter the products on an eCommerce site, redirecting them might be confusing for various reasons, like a sudden change of breadcrumbs.

Additionally, it might be necessary to have duplicate content on your site when you have different versions for different devices.

With a 301 redirect, only one of the pages stays available on your site. The rest will be automatically redirected.

A 301 redirect might be helpful when, for example, you have two very similar blog posts and decide only one should remain on your site. The 301 status code will redirect traffic and ranking signals to your chosen article. It’s an excellent method of optimizing your crawl budget, but you can use it only when you want to remove the duplicate page.

Remember to make changes in your sitemap whenever you use permanent redirects. You should only put pages that respond with 200 status codes in your sitemap. Therefore, if you’re using the 301 redirect to consolidate content, only the version that stays on your website should remain in the sitemap.

Pages with no search demand

You might have good content on your site that doesn’t have any search demand. In other words, nobody is looking for it. This might happen when you’re writing about a niche hobby or have pages with, e.g., a “thank you” note for your users.

These pages might bring no traffic or conversions. Perhaps you want to leave them because they complement users’ journeys, but you don’t want them to be the first thing users see on the search result.

If you don’t think users should see a specific page in search results, or the page doesn’t bring any traffic, there’s no need to keep it indexed. This way, search engine bots can focus on the pages that actually get you traffic.

To block the indexing of a page with no search demand, use the noindex meta robots tag. Bots won’t index it, but they will still crawl it and follow links on that page, giving them more context about your website.

Pages valuable only to search engines

Not all pages are meant to help users. Some of them help search engines learn about your website and discover links.

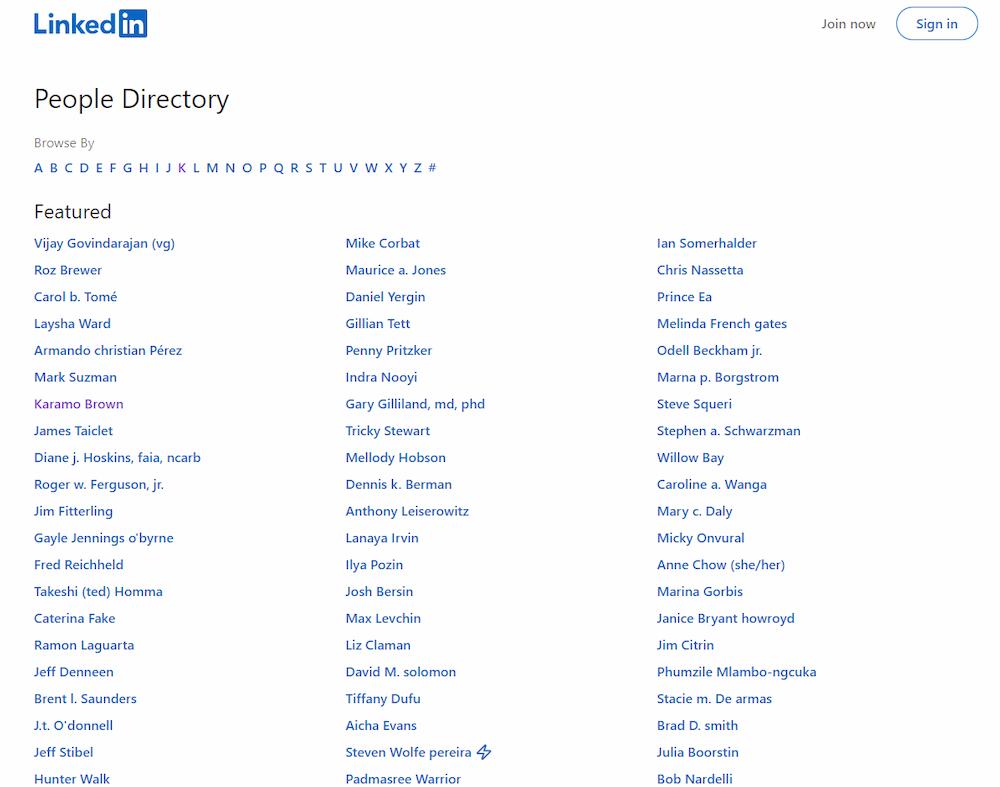

Take a look at this LinkedIn page:

It lists all users’ profiles, making it easy for search engines to find all links.

On the one hand, pages like these might confuse users and discourage them from staying on the site. They are not valuable to them, so they shouldn’t appear in search results and shouldn’t be indexed.

On the other hand, they are useful for search engines – they boost your internal linking.

That’s why the best solution is to implement noindex meta robots tags, leave these pages out of your sitemap, and allow their crawling in robots.txt. They won’t be indexed, but bots will crawl them.

Pages not valuable to anyone

Some pages are not valuable to users or search engines.

Some of them are required to exist on your site by law, e.g., privacy policy, but, let’s be honest – nobody is searching for this type of content. Of course, you can’t remove them, but there’s no need for them to be indexed because nobody wants to find them. In some cases, they might outrank more valuable content and “steal” traffic.

Pages with no value also include thin, low-quality content. You should pay particular attention to them, as they can hurt the way users and search engines perceive your site’s overall quality. Refer to the Low-quality content can damage your website chapter for more information.

Most importantly, you need to ensure the pages with no value have the noindex meta robots tag. If you don’t block their indexing, they might harm your rankings and discourage users from visiting your website.

Additionally, if you want to optimize your crawl budget, block these pages in the robots.txt file and remove internal links pointing to them. This will help you save the crawl budget for more valuable pages.

Wrapping up

Knowing which of your pages should and shouldn’t be indexed and communicating it to search engine bots is crucial in creating a sound indexing strategy.

It will maximize the chances of your website being crawled and indexed properly and ensure your users can find all your valuable content in search results.

Here are the key takeaways you need to keep in mind while creating your indexing strategy:

- When deciding whether a page should be indexed, ask yourself if it has unique content with value for users. Unique, valuable pages shouldn’t be blocked from being indexed by noindex meta robots tags or blocked from being crawled using the robots.txt disallow directives.

- If your low-quality content is indexable, it can negatively affect your ranking and put your valuable pages at risk of not being indexed.

- If you have duplicate or near-duplicate content on your site, you should consolidate it with a canonical tag or 301 redirect.

- If a page has no search demand, it doesn’t have to be indexed – use the noindex in the meta robots tag.

- Pages that contain content or links valuable only to search engines should be blocked from being indexed using the noindex meta robots tag, but don’t block them from being crawled in robots.txt.

- If neither users nor search engines benefit from visiting a given page, it should be set to noindex in the meta robots tag.

- If you have multiple, alternate language versions of the same page, keep them indexable. Use the hreflang tag to help search engines understand how these pages are related.

Hi! I’m Bartosz, founder and Head of Innovation @ Onely. Thank you for trusting us with your valuable time and I hope that you found the answers to your questions in this blogpost.

In case you are still wondering how to exactly move forward with your organic growth – check out our services page and schedule a free discovery call where we will do all the heavylifting for you.

Hope to talk to you soon!