Did you notice a change in your website’s indexing last week?

Last week, Google released the October 2022 spam update. The weekend before, there was likely an additional, unannounced update.

What was it about? I don’t know, but I know multiple URLs on our site were deindexed, and we’re not alone.

Using ZipTie.dev, we can clearly see which URLs were deindexed and therefore aren’t getting traffic anytime soon.

Multiple other sites we’re tracking also lost a large portion of their indexed pages. In the case of this particular update, it looks like Google deindexed pages that weren’t getting much traffic even though they used to be indexed.

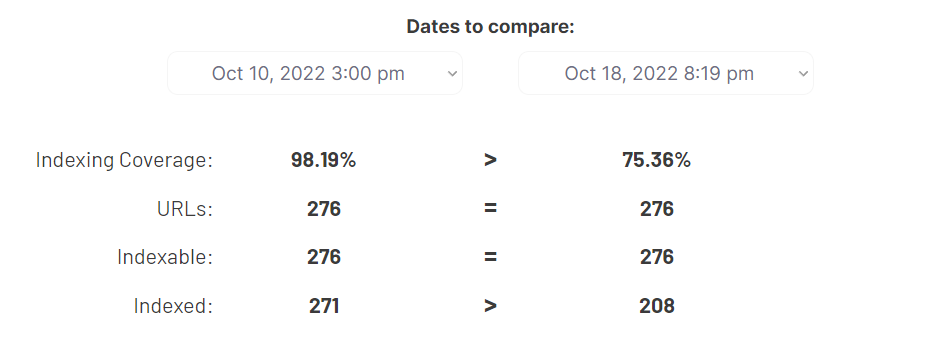

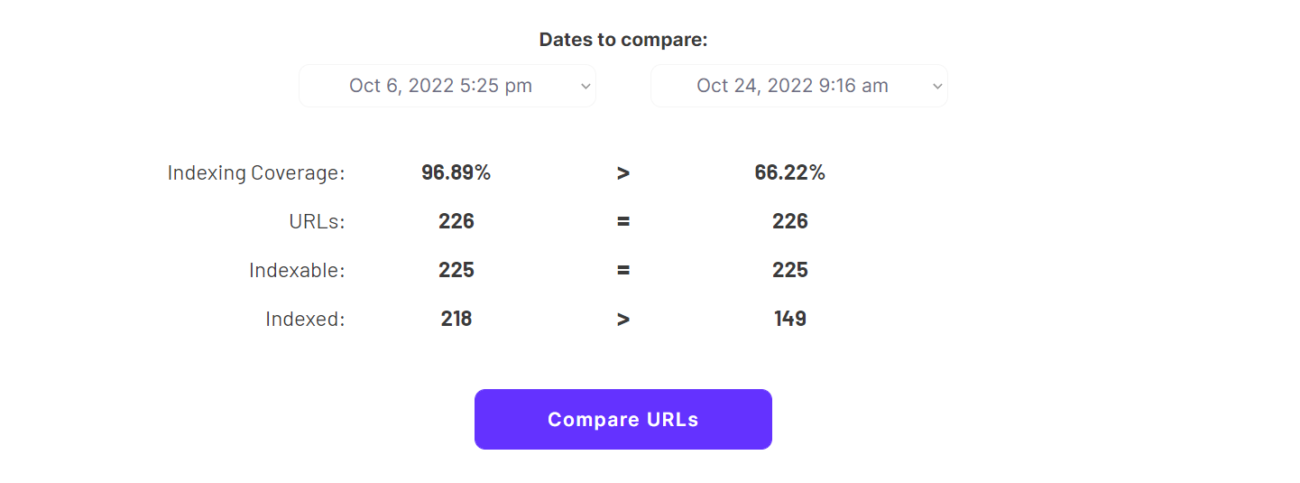

Here’s how our Index Coverage changed:

It went from 98% of indexable URLs being indexed to just 75%.

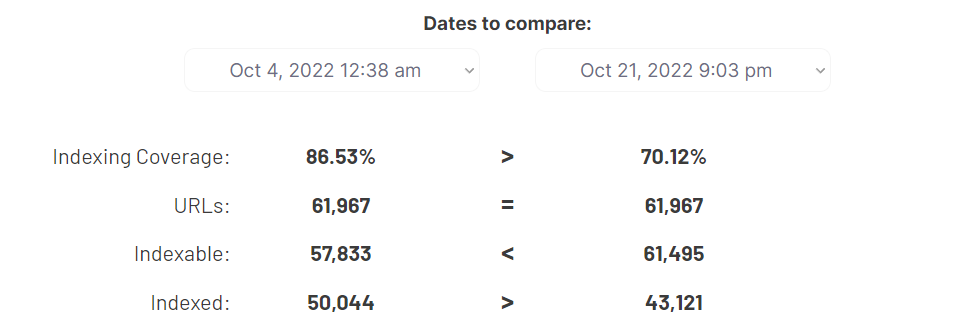

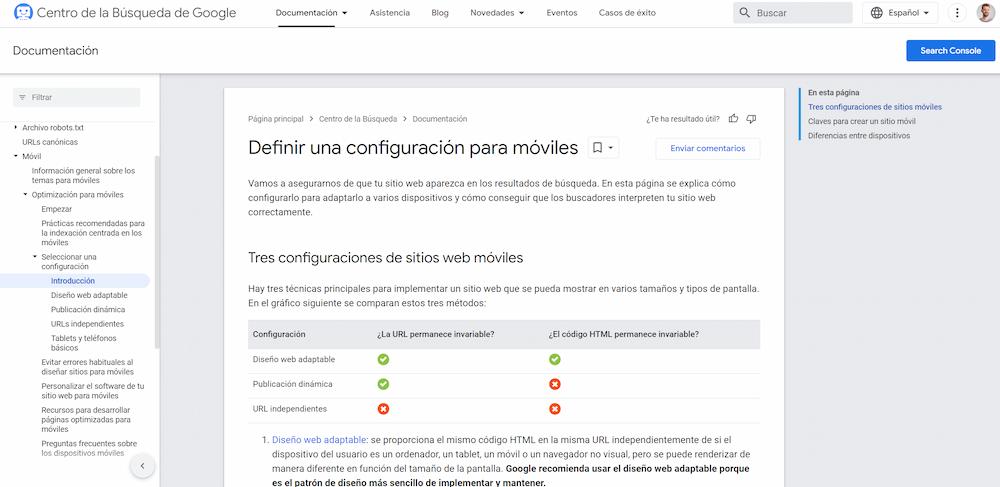
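The coverage figure here is simply the share of indexable URLs that are actually indexed. As a rough illustration, here is a minimal Python sketch of that arithmetic. It assumes you already have two URL lists exported from a crawler or an indexing tool; the function name and the sample URLs are hypothetical and are not ZipTie's API.

```python
def index_coverage(indexable_urls, indexed_urls):
    """Return the percentage of indexable URLs that are indexed."""
    indexable = set(indexable_urls)
    if not indexable:
        return 0.0
    # Only count indexed URLs that are also in the indexable set.
    indexed = indexable & set(indexed_urls)
    return 100.0 * len(indexed) / len(indexable)


# Hypothetical sample data: four indexable URLs, three of them indexed.
indexable = ["/blog/a", "/blog/b", "/blog/c", "/blog/d"]
indexed = ["/blog/a", "/blog/b", "/blog/c"]
print(index_coverage(indexable, indexed))  # 75.0
```

Running the same calculation on snapshots taken before and after an update gives you a before/after comparison like the one above.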

As I said, we’re not alone. Developers.google.com had a significant drop in indexed URLs even though the number of indexable URLs on that website increased in the meantime. Its coverage went from 86% of indexable URLs being indexed to just 70%.

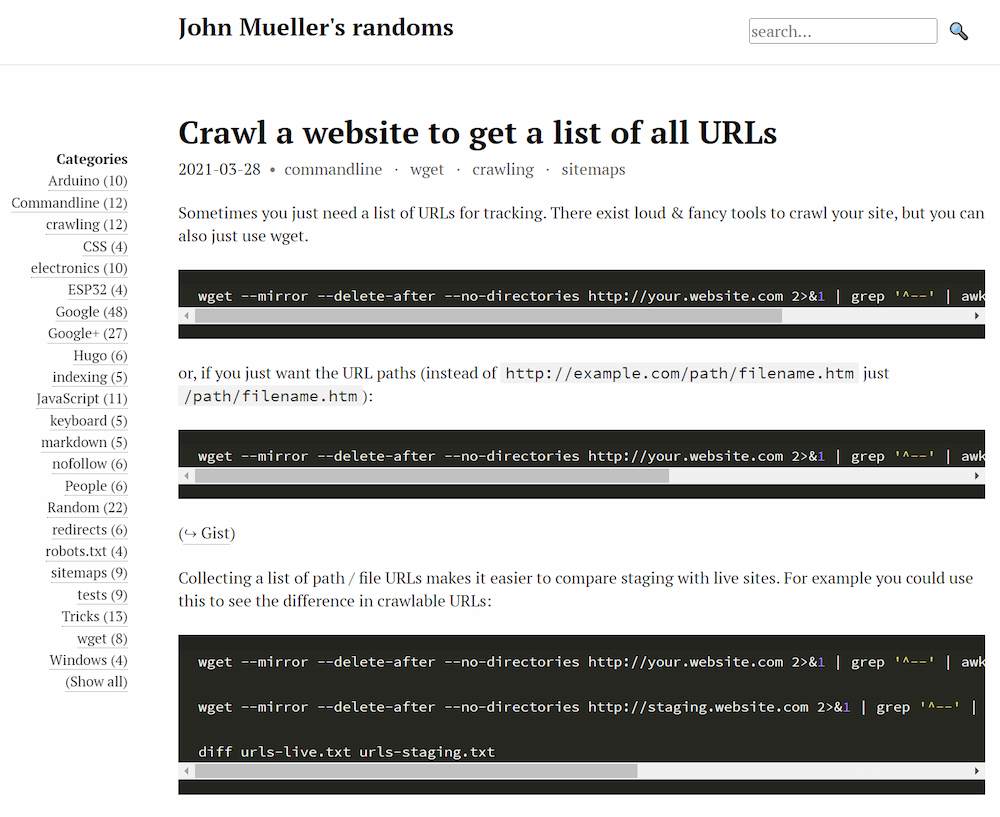

John Mueller’s website (johnmu.com) got heavily deindexed too.

Which pages got deindexed?

With johnmu.com, over 60 posts were deindexed. Many of them were old, and in fact, some of them were super old. But many of them were fresh and useful. I found one deindexed post that I remember reading because it was so useful. It’s a post about using the wget command line tool for crawling.

In the case of developers.google.com, the issue is a bit more complex. Many of the URLs that Google deindexed are external resources. But I also found some valuable pages that must be getting some traffic. For example, Google deindexed its own guide to Mobile SEO in its Chinese and Spanish versions.

In our case, it was a collection of thin-content pages about company-related events. We never expected these pages to rank, but it’s been a way for us to keep track of what’s been happening in the company.

Google didn’t like these pages. And this whole collection of posts got deindexed overnight. After I noticed it, I decided to 404 all those pages.

If you want more examples, or if you want to check the coverage of a specific website, let me know on Twitter.

Are core updates about indexing?

For a while now, we’ve been noticing that core updates are, at least to a degree, about the size of Google’s index.

SEOs typically focus on search visibility – how many keywords a given website is ranking for, and how many people search for those keywords. ‘Core Update losers’ get noticed because their visibility drastically drops overnight. And then people analyze the quality factors that may be to blame.

But why exactly does their visibility drop? We usually try to analyze those sites without access to their GSC accounts, which means we don’t have any data about indexing.

Especially with large websites (and they are the most interesting), this makes it impossible to know if their visibility dropped because their rankings dropped, or because their pages were removed from the index altogether.

Or I should say, it was impossible, until recently. Last month, we officially launched ZipTie, our indexing intelligence platform. It lets you analyze any website and see its indexing statistics.

We’ve been using ZipTie to analyze popular websites for months now. And we now have data to show that many of Google’s core updates are actually about indexing.

What I mean is that if a website’s visibility gets hurt during a core update, it’s often because some of its pages get dropped from the index.

And it makes sense from Google’s perspective. If some pages are indexable but Google thinks they shouldn’t be – why keep them in the index? Indexing consumes resources, and keeping a page indexed over time consumes even more, because every page needs regular recrawling so that Google knows its current contents.

This seems to be what happened to our site over the weekend.

I say it’s a good thing. We might have gotten 2 organic clicks to these posts over the years. If Google doesn’t want them indexed, so be it.

But what are the consequences of this for large websites? And for the whole SEO industry?

We have way more data to show how the indexing of large websites fluctuates as core updates are released. It turns out that for years, we were likely looking at the wrong metrics when analyzing core updates.

Next time you get hit with a core update, check if any important pages were deindexed before you do anything else. Getting Google to index them again might be your most important task. This time, I think Google focused on low-traffic pages, but that’s not necessarily always the case.
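One way to run that check is to compare the set of indexed URLs from before the update with the set from after it. The Python sketch below assumes you have both snapshots plus your own list of pages you consider important; the function and the URLs are hypothetical, and where the snapshots come from (GSC exports, an indexing tool, and so on) is up to you.

```python
def find_deindexed(indexed_before, indexed_after, important_urls=()):
    """List URLs that were indexed before but not after.

    Important URLs are listed first, then the rest, each group sorted
    alphabetically so the output is stable.
    """
    dropped = set(indexed_before) - set(indexed_after)
    important = set(important_urls)
    return sorted(dropped, key=lambda url: (url not in important, url))


# Hypothetical snapshots taken before and after an update.
before = {"/pricing", "/blog/event-2019", "/blog/guide"}
after = {"/pricing"}
print(find_deindexed(before, after, important_urls={"/blog/guide"}))
# ['/blog/guide', '/blog/event-2019']
```

Anything at the top of that list is worth investigating first, since it is a page you care about that dropped out of the index.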

You can contact us if you need any help recovering from a core update.