If you build or manage a business website, your normal day at work probably involves monitoring the website’s performance, looking at various KPIs, and comparing it with the competition.

These days, the chances are great that your website relies on JavaScript in one way or another. If you’ve ever struggled to pinpoint why you don’t get as much search traffic as you’d expect, you should read this.

If your website uses JavaScript, let me introduce you to the vicious cycle of a low crawl budget.

1. You update your website with new products

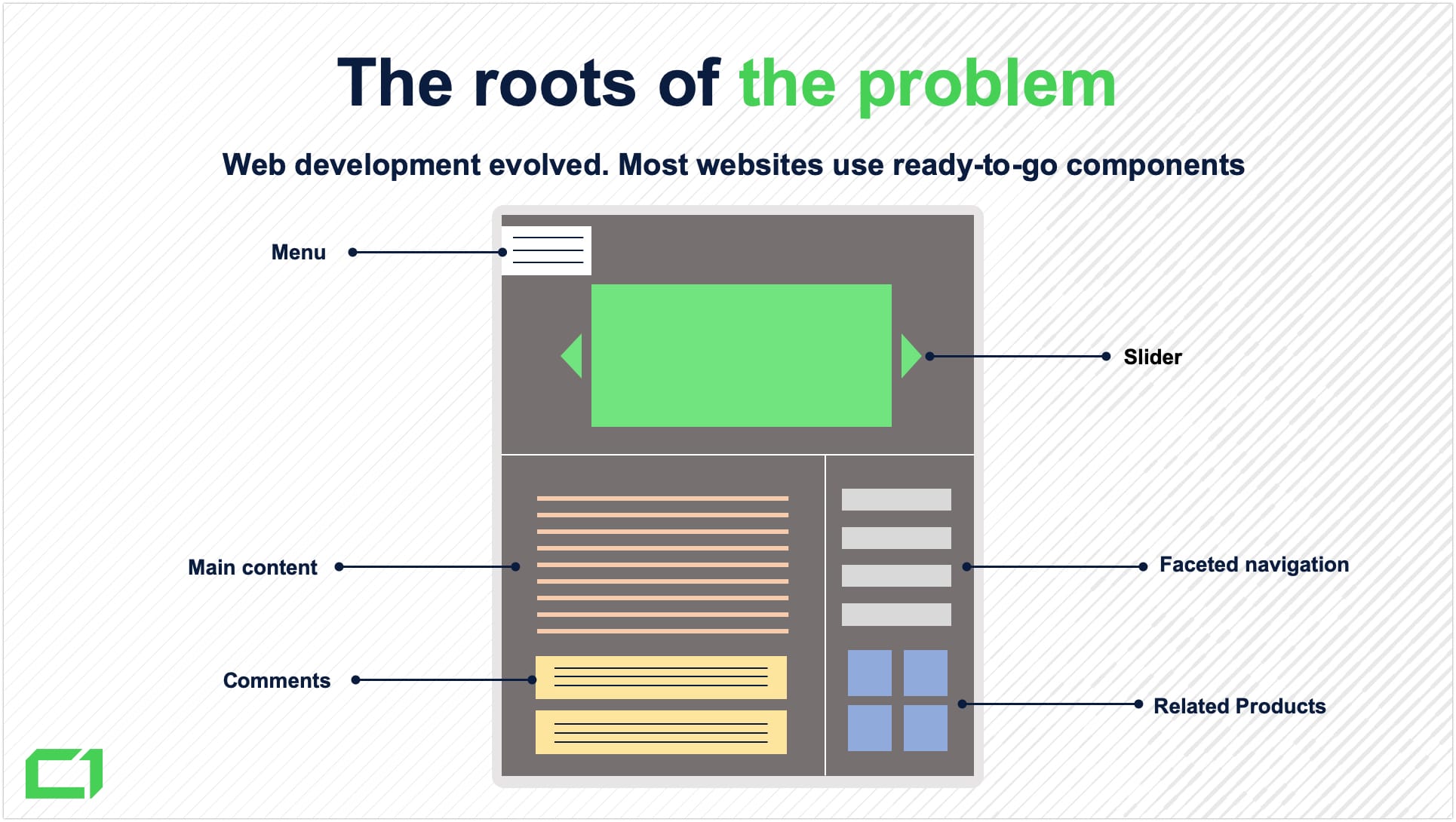

Every day, you probably add many new pages to your website. Whether these are product pages, articles, or news, they are probably fairly similar:

- There’s the main body containing text, photos, and maybe a video,

- There are links to related pages,

- There is a comment or review section.

From a technical standpoint, there’s a good chance that some of these elements will be injected into the page by JavaScript. Although it’s a bit heavy, JavaScript allows for creativity and functionality that is virtually impossible to achieve using only HTML and CSS.

After your new pages are published, all you have to do is eagerly wait for Google to discover them and start showing them to potential customers.

2. Google crawls your website without seeing all the links

This is where things may go downhill.

Google uses complex software known as Googlebot. It’s a system of algorithms that discover new links on the internet and follow them to crawl the pages that they point to. Once Googlebot knows what the page is about, the page is sent to Google’s index.

This sounds fairly simple, right? But you need to factor in not only the millions of new links that need to be followed every day, but also the pages that have already been indexed, which need to be recrawled in case their content is updated.

Due to limited resources, the search engine sets priorities for its crawling algorithms and must determine the number of pages to visit for every domain.

Google defines these boundaries as the crawl budget – the number of URLs Googlebot can and wants to crawl.

Limited resources mean that Googlebot prefers first to discover content that is easily accessible.

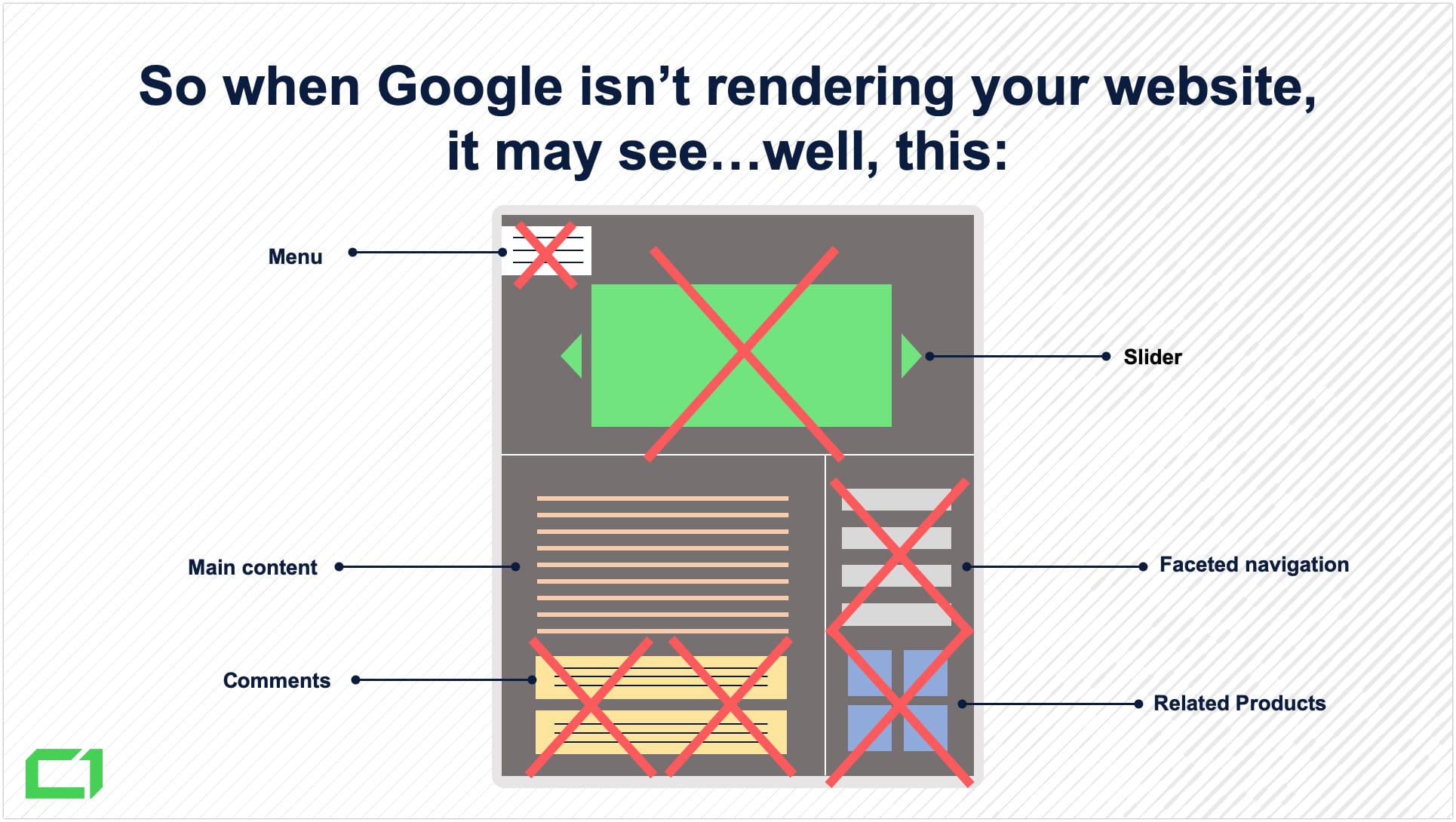

As I mentioned before, JavaScript is heavier than HTML and CSS: it takes more computing power to render. A plain HTML file is very easy to index, but when JavaScript comes into the picture, Googlebot has to spend more resources to see the page’s contents.

Because of the restrictions of the crawl budget, you shouldn’t expect your newly added pages to be fully rendered by Googlebot.

Whatever it finds in the HTML code will be indexed fairly seamlessly, but the JavaScript elements may remain undiscovered for months, waiting until Googlebot has enough resources to render them.
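
To make the difference concrete, here is a minimal sketch of what a crawler can read from a page before any JavaScript is rendered. It only uses Python's standard `html.parser` module. The sample page, the `PRODUCT_HTML` constant, and the `text_without_rendering` helper are hypothetical names made up for illustration; this is not how Googlebot is implemented, just a rough approximation of "HTML only" versus "after rendering".

```python
from html.parser import HTMLParser

# Hypothetical product page: the description is injected by JavaScript,
# so it is absent from the HTML that the server sends.
PRODUCT_HTML = """
<html>
  <body>
    <h1>Blue Widget</h1>
    <div id="description"></div>
    <script>
      document.getElementById("description").textContent =
        "Hand-made widget with a lifetime warranty.";
    </script>
  </body>
</html>
"""


class VisibleTextParser(HTMLParser):
    """Collects text outside <script> and <style> without running any JavaScript."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside <script> or <style>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def text_without_rendering(html):
    """Return the text that is readable from the raw HTML alone."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


if __name__ == "__main__":
    # Only the heading is readable; the description exists only after rendering.
    print(text_without_rendering(PRODUCT_HTML))
```

If the text that makes a page valuable is missing from this kind of output, that content depends entirely on the rendering step.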

3. Googlebot only crawls part of the domain without finding products (valuable content)

What if Googlebot can’t see the main body of the page because it relies on JavaScript that wasn’t rendered?

If it’s a product page, Googlebot doesn’t discover the product description or the photos, and if it’s an article, it can’t see the entire article (despite what the image says below!).

Or what if Googlebot doesn’t discover the links to related pages that are injected with JavaScript? The architecture of the entire domain is compromised. In this case, Google may assume that the pages that aren’t well linked to internally are less important, and won’t show them as much in the search results.
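
The same idea applies to internal links. The sketch below, again using only Python's standard `html.parser`, lists the links that are present in the raw HTML. The sample markup, the `PAGE_HTML` constant, and the `links_without_rendering` helper are assumptions made for illustration, not a description of Googlebot's actual link discovery.

```python
from html.parser import HTMLParser

# Hypothetical page: the navigation link is in the HTML,
# while the "related products" link is injected by JavaScript.
PAGE_HTML = """
<nav><a href="/category/widgets">Widgets</a></nav>
<div id="related"></div>
<script>
  document.getElementById("related").innerHTML =
    '<a href="/products/red-widget">Red Widget</a>';
</script>
"""


class LinkCollector(HTMLParser):
    """Collects href values of <a> tags found in the raw HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        # Markup inside <script> is treated as plain data by HTMLParser,
        # so links that only exist in JavaScript strings never reach here.
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_without_rendering(html):
    """Return the links discoverable without executing JavaScript."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.links


if __name__ == "__main__":
    # The JavaScript-injected related link is not in the result.
    print(links_without_rendering(PAGE_HTML))
```

Comparing this list with the links you see in the browser gives a rough idea of which internal links depend on rendering.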

To summarize, when Googlebot can’t discover the most important parts of your website, it will spend the entire crawl budget crawling through low-quality content instead. If that happens, you shouldn’t be surprised that Google won’t recognize the value you provide for the users.

4. Google is confused and the crawl budget falls

Looking at it from Google’s perspective, Googlebot wasted time crawling your seemingly incomplete pages instead of the worthwhile content it was looking for. Next time, Googlebot will remember that there’s little to gain from crawling that domain, and it may not have enough interest to render the JavaScript and see the product description that actually makes the page valuable. Typically, this manifests as pages reported as “Discovered – currently not indexed” in Google Search Console.

5. The crawl budget is too low to render JS

This is where we come full circle.

Because Googlebot didn’t fully render your pages the first time it visited them, it didn’t discover all the valuable JavaScript-powered content. Every figurative penny of your crawl budget was spent on crawling thin content. Because of that, Google assumed that the overall quality of your website doesn’t meet expectations.

As a consequence, your crawl budget is further lowered.

Next time, Googlebot will be even less likely to render the JavaScript and see the elements of your pages that actually make them valuable.

How can you break the cycle?

Here’s another cycle to consider:

- With a low crawl budget, your website may become more difficult to crawl.

- Entire pages from your website may not get discovered and indexed for weeks or even longer.

- Your customers may never discover your new content in the search results.

- You may lose money.

From a technical SEO expert’s perspective, it’s painful to watch businesses continue to spend money on online advertising while their websites aren’t even fully indexed in Google.

If you think your website is trapped in a vicious cycle, Onely can provide your team with the JavaScript SEO solutions your website needs to break free.

And, for further reading on how Google sees your JavaScript-based content, take a look at our ultimate guide to JavaScript SEO.