Note: A lot has changed since I wrote this article in 2014. Before reading this case study, I highly recommend my SearchEngineLand guest post – Is CTR a ranking factor?

Something was seriously wrong with two of my clients’ sites. They weren’t suffering from Panda or Penguin issues. Their sites were healthier than most of their competitors’. I spent 2 months fixing every issue I could think of, yet no rankings changed. Something else, something new, was suppressing their search visibility.

I explored everything I could. Their backlinks were clean, their citations were accurate and their on-page elements were also properly optimized.

What was it? I had to get to the bottom of it.

Looking Beyond Backlinks

I was forced to look beyond their link profile for the answer. When I did, I found two unusual things:

After auditing everything I could think of, I started to crawl their website hourly. Turns out this was a smart move because it helped me find the issue.

Sabotaged Servers (but not DDoS)

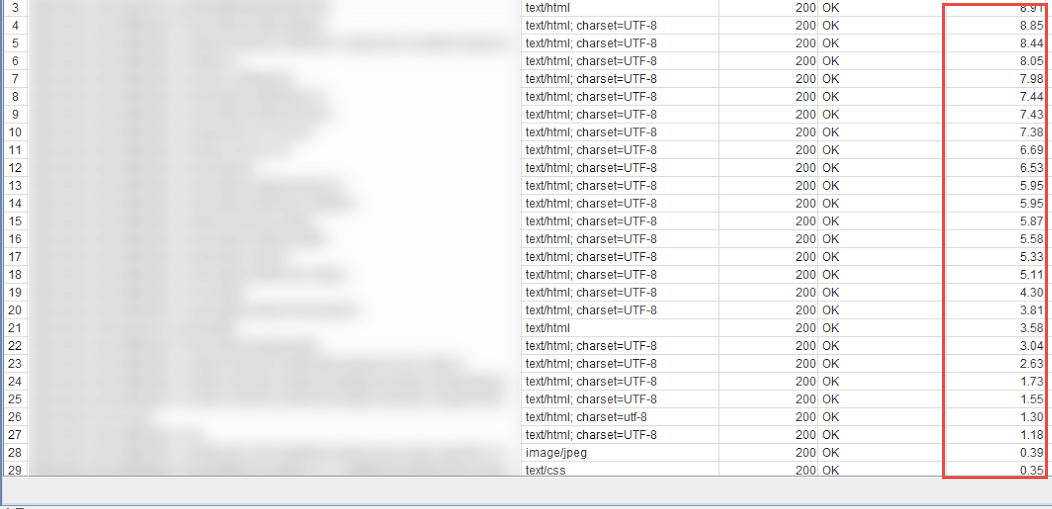

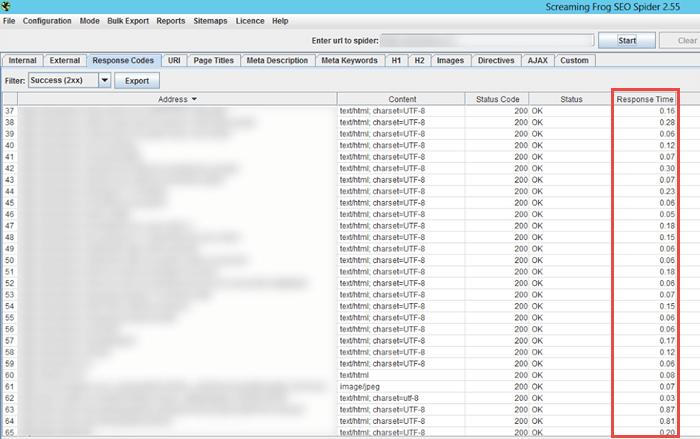

One of the sites showed heavy server load in the early morning hours, caused by heavy crawling.

Competitors (I assume) were slowing down a client’s server by crawling it heavily from different IPs, loading large images (5+ MB) to high-traffic websites, etc. The attacks typically occurred between ~2 am and 5 am. I typically crawled the website only between 9 am and 8 pm, so I never saw any performance issues.
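A pattern like this tends to show up in the server’s access log as a spike in requests during hours when real visitors are asleep. Below is a minimal sketch of how such a check could look, assuming the log is in the common Apache/Nginx access log format. The function names, the sample lines and the "3x the median hour" threshold are illustrative assumptions, not the method I used on the client sites.

```python
import re
from collections import Counter
from datetime import datetime

# Matches the start of a Common/Combined Log Format line: client IP and timestamp.
# Assumption: the server writes logs in that format.
LOG_LINE = re.compile(r'^(\S+) \S+ \S+ \[([^\]]+)\]')


def requests_per_hour(lines):
    """Return {hour: (request_count, distinct_ip_count)} for hours 0-23."""
    hits = Counter()
    ips = {}
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the assumed format
        ip, stamp = match.groups()
        hour = datetime.strptime(stamp, "%d/%b/%Y:%H:%M:%S %z").hour
        hits[hour] += 1
        ips.setdefault(hour, set()).add(ip)
    return {hour: (hits[hour], len(ips[hour])) for hour in sorted(hits)}


def busiest_hours(per_hour, factor=3.0):
    """Return hours whose request count exceeds `factor` times the median hour."""
    counts = sorted(count for count, _ in per_hour.values())
    if not counts:
        return []
    median = counts[len(counts) // 2]
    return [hour for hour, (count, _) in per_hour.items() if count > factor * median]


# Hypothetical log lines, for illustration only.
sample = [
    '203.0.113.5 - - [16/Aug/2014:03:12:45 +0000] "GET /img/big.jpg HTTP/1.1" 200 5242880',
    '203.0.113.6 - - [16/Aug/2014:03:13:01 +0000] "GET /img/big.jpg HTTP/1.1" 200 5242880',
    '198.51.100.7 - - [16/Aug/2014:14:02:10 +0000] "GET / HTTP/1.1" 200 1024',
]
print(requests_per_hour(sample))
```

On a real log you would pass the open file instead of `sample`. Hours that `busiest_hours` flags between roughly 2 am and 5 am, with many distinct IPs, would be worth a closer look.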

Their server was crawling:

It should have looked fast, like this:

Fake terrible user experience

The second site suffered click-through attacks (from Google SERPs) on main keywords and brand-related terms. It looked like a CTR bot was programmed to search their terms and click on results except for their site. It created a false SERP bounce rate, diluting brand signals and letting competitors take their position in SERPs.

Diagnosing those issues took a while. Once I found a solution using a CTR bot, the sites slowly started recovering after 3-4 weeks (probably with a Panda roll-out). Both recoveries are still in progress.

This Shouldn’t Be Happening

It got me thinking. In theory those issues shouldn’t have been possible because:

- Neither site had a negative SEO attack related to link building.

- Both sites were technically sound.

- Both sites were better than competitors and had unique value to customers.

- Plus, both sites had spent a lot of time (some of it with me) on technical SEO audits, content audits, content rewrites, title rewrites, etc. One of the sites (ecommerce) rewrote 40% of its descriptions (descriptions are all duplicates in that niche).

- Both websites were relatively new: One was about 1.5 years old, and the other was about 3 years old. (They were not established brands yet, so it may have been easier to dilute their signals.)

So…what happened? Was it just a fluke? Or was it negative SEO?

I shared this case with some of my friends in the SEO community and I got mixed responses. A few saw signals that confirmed my suspicions, but others rejected the idea “Matt Cutts” style, saying such an attack was impossible.

Was it possible to attack a site without backlinks? I had to know. To find out, I experimented on my blog.

Testing Negative SEO On Myself

My blog is quite new (~12 months old). I have never done any link building, but it still has many positive signals and natural links. I was thrilled with how well my content was ranking. Could I replicate my clients’ issues and “kill” that with user experience signals?

The Victim

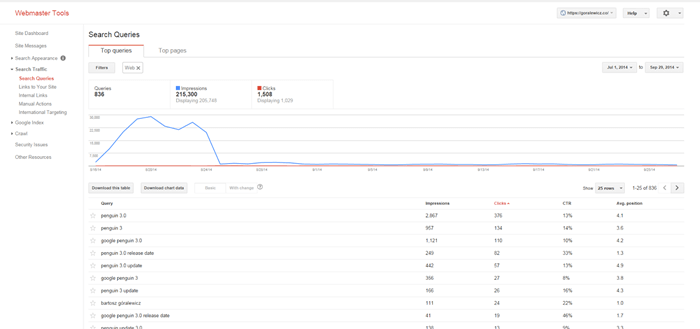

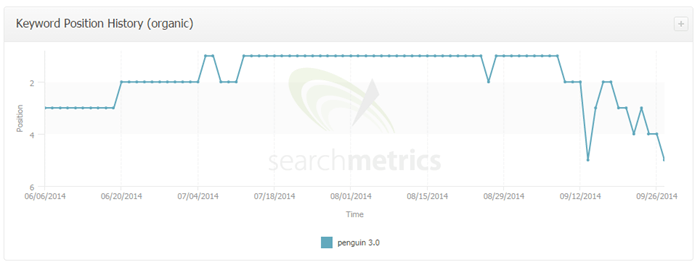

I decided to target one of my best keywords: “Penguin 3.0.” Since April, my blog consistently ranked between the #1 and #2 spot in SERPs for this query. I was the first one to blog about it and got a lot of natural links to the page. The links were high quality and I enjoyed stable rankings.

The Tool:

I created a bot to search for “penguin 3.0” and click on a random website. There was only one exception: it was programmed not to click on http://goralewicz.co/, thereby massively lowering my Click Through Rate.
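To see why skipping a single result drags its Click Through Rate down, here is a small worked example. All numbers are hypothetical and chosen only to make the arithmetic easy to follow. They are not measurements from my test.

```python
def click_through_rate(clicks, impressions):
    """CTR as a fraction: clicks on a result divided by the times it was shown."""
    return clicks / impressions if impressions else 0.0


# Hypothetical baseline: 1,000 real searches, 300 of which click the page.
organic_clicks, organic_searches = 300, 1000
# Hypothetical bot traffic: 2,000 extra searches that never click the page.
bot_searches = 2000

before = click_through_rate(organic_clicks, organic_searches)
after = click_through_rate(organic_clicks, organic_searches + bot_searches)
print(f"CTR before: {before:.0%}, after: {after:.0%}")
```

The clicks on the page stay the same. Only the number of searches that ignore it grows, so in this example the ratio falls from 30% to 10%.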

The Verdict:

On August 16, 2014, I sent a load of traffic to this keyword from all around the world, clicking everything except my website.

My Penguin 3.0 page quickly plummeted.

I monitored it closely to ensure that my lost visibility wasn’t due to competitors jumping above me with new content or other factors. Nothing else but that CTR bot could have caused my ranking to slip so fast.

New Ranking Signals Create SEO Danger

But that wasn’t the only issue I discovered. I spent a lot of time analyzing the attacks on my clients’ sites. I found a few other new and serious ways negative SEOs could target a site. I will not list those here as I don’t want to pitch ideas to black hat SEOs, but if you want to be protected against those tactics, be sure to cover the following:

- Keep your onsite SEO spotless

- Avoid index bloat

- Monitor your new links

- Closely monitor all Google Webmaster Tools data

This is not only a negative SEO precaution. It is essential to monitor those factors on a weekly or monthly basis to maintain high positions in SERPs.
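Part of that monitoring can be automated. The sketch below assumes you have already exported daily clicks and impressions per query (for example from Google Webmaster Tools) into a dictionary. The data layout, the function names, the 28-day and 7-day windows and the 50% threshold are all assumptions made for illustration, so tune them to your own traffic.

```python
def _ctr(rows):
    """Aggregate CTR over a list of (clicks, impressions) pairs."""
    clicks = sum(c for c, _ in rows)
    impressions = sum(i for _, i in rows)
    return clicks / impressions if impressions else 0.0


def ctr_drop_alerts(daily, baseline_days=28, recent_days=7, threshold=0.5):
    """Flag queries whose recent CTR fell below `threshold` times their baseline CTR.

    `daily` maps a query to a chronological list of (clicks, impressions) pairs,
    one pair per day. Returns {query: (baseline_ctr, recent_ctr)}.
    """
    alerts = {}
    for query, rows in daily.items():
        if len(rows) < baseline_days + recent_days:
            continue  # not enough history to compare
        baseline = rows[-(baseline_days + recent_days):-recent_days]
        recent = rows[-recent_days:]
        base_ctr, recent_ctr = _ctr(baseline), _ctr(recent)
        if base_ctr > 0 and recent_ctr < threshold * base_ctr:
            alerts[query] = (base_ctr, recent_ctr)
    return alerts


# Hypothetical data: impressions triple in the last week while clicks stay flat.
daily = {
    "penguin 3.0": [(30, 100)] * 28 + [(30, 300)] * 7,
    "stable query": [(10, 100)] * 35,
}
for query, (base, recent) in ctr_drop_alerts(daily).items():
    print(f"{query}: CTR {base:.0%} -> {recent:.0%}")
```

A flagged query is not proof of an attack, since seasonality or a SERP layout change can produce the same pattern. It is only a prompt to look closer.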

User Experience is a Strong Signal

Clearly, user experience is a strong signal. SearchMetrics’ 2014 Ranking Factors seem to confirm my findings. I recently talked with a Yandex engineer, who admitted that they don’t track links for commercial queries in Yandex now. A new website has to gain traffic from sources other than search engines to be considered for ranking in the Yandex algorithm.

Is Google going this way as well? Recent ranking freezes reported all over Black Hat forums seem to confirm similar patterns in Google. If so, then that exposes SEO to a new and discreet threat: Linkless Negative SEO.

Linkless Negative SEO

If what happened to my clients is any indication, here’s the future of negative SEO:

- Quiet

- Hidden

- Almost impossible to diagnose

- Based on a “bleed slowly” technique

- LEGAL

This is worrying. SEOs are caught between two pincers: Google and site saboteurs. On one side you have Google making it tough to rank by constantly redefining their algorithm. And on the other side, you have opportunists willing to exploit Google’s changes to damage and destroy their competition.

Linkless Negative SEO is attractive to attackers for six reasons, most of which are weaknesses of link-based attacks:

- Simply shooting links at sites is predictable

- Affected sites can easily find bad backlinks

- People will quickly disavow them

- Attacked sites can track you down

- You can end up helping them (just ask the Dejan SEO guys)

- Linkless attacks work well combined with “old school” methods employed as decoys

Conclusion

After Penguin, SEOs became responsible not only for building links but also for protecting their clients from dangerous links. Now they will have to be even more vigilant. Linkless Negative SEO is dangerous and has the potential to become one of the worst things to happen to our industry.

Negative SEO used to be just firing up xRumer and shooting bad links to sites. It was a bad way of doing business, but it was easy to spot and clear how to combat it. I am still disavowing thousands of links per week for some of my customers.

Finding and disavowing bad links is time-consuming, but fixing all these user experience issues is much more complicated. Plus, this kind of attack is virtually undetectable. Unless you know what to look for, I believe you would never know what was happening.

In the face of this new threat, SEOs need to carefully watch out for Linkless Negative SEO if they are to survive and prosper online.