An apocalypse so inevitable, the Fourth Horseman stayed home.

Technology is evolving at an ever-faster pace, and it’s affecting SEO in ways we couldn’t have imagined a year ago. That’s why, like it or not, SEO is getting more and more technical.

I believe this post will be a huge eye-opener, showing how much new technologies now affect SEO. It was hard to ignore two years ago, but now it’s official: you can’t do SEO without getting technical. Love it or hate it, if you still aren’t convinced, keep reading. I’m certain this article will change your mind.

The First Horseman – Mobile-First

I don’t know about you, but I am sick of hearing about the Mobile-first index. It’s hard to find something that Cindy Krum hasn’t already covered in her amazing series of articles (make sure you bookmark and read part 1, part 2, and part 3).

However, if you look at Mobile-first from a technical SEO point of view, two things are game-changers for SEOs: 1) CPU limitations and 2) internet connection speed.

- Mobile devices have massive CPU limitations compared to desktops.

According to my research, a lot of modern websites are ruthlessly KILLING mobile CPUs. Let me show you an example that will haunt your dreams.

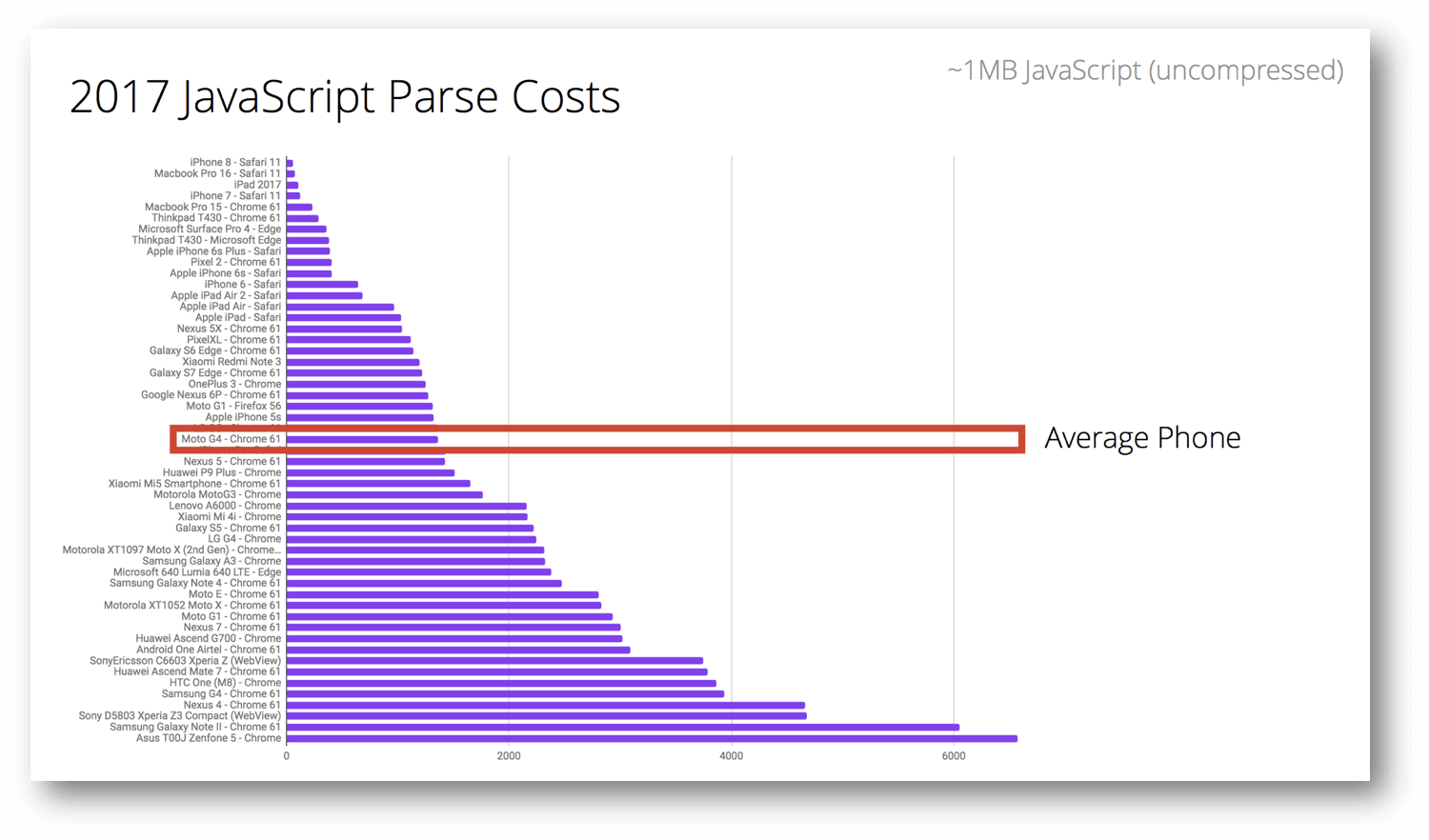

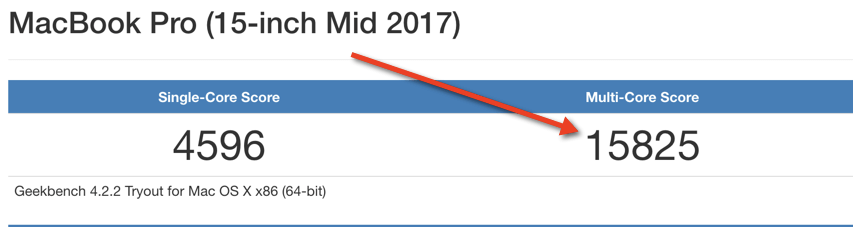

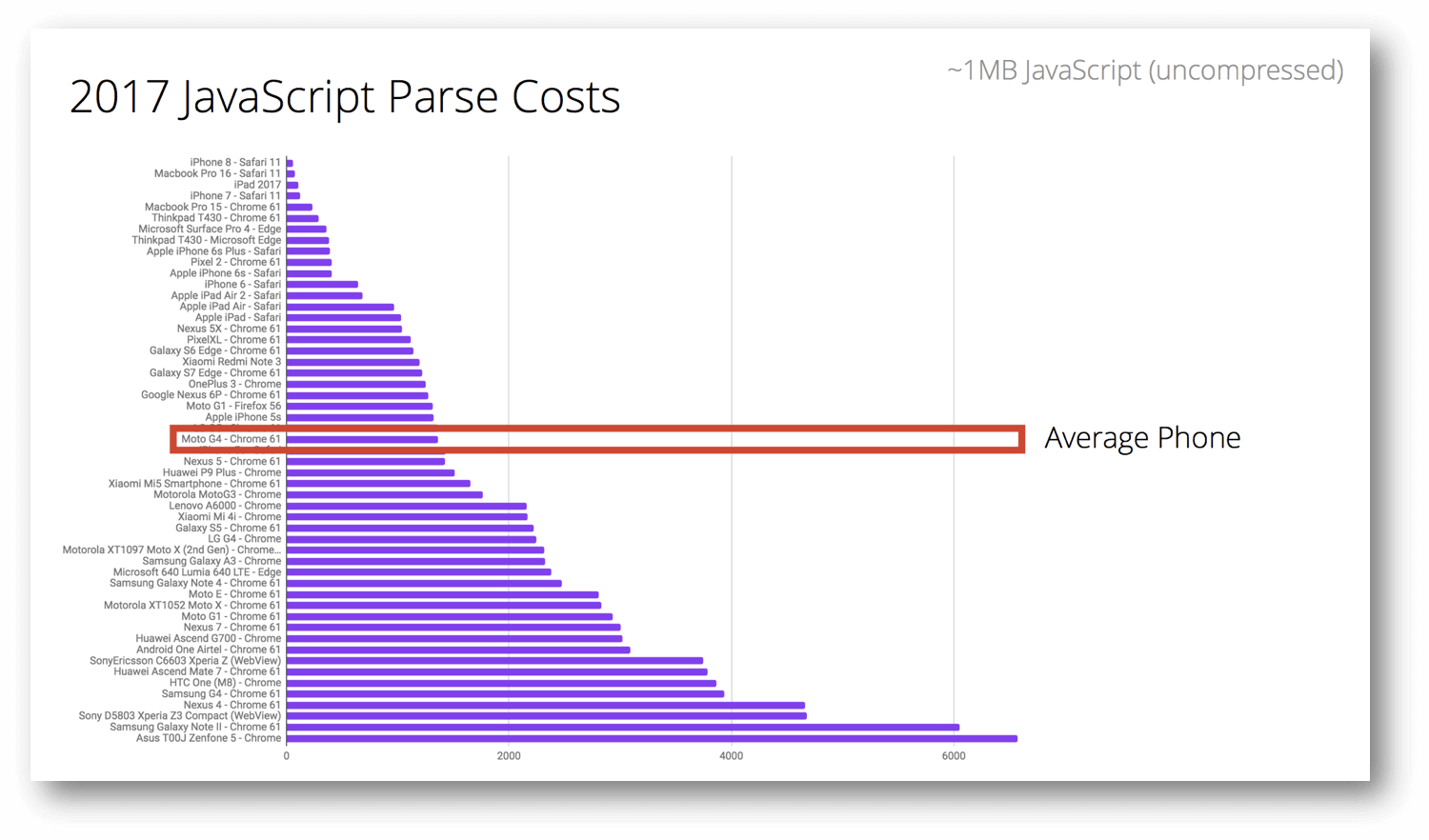

Addy Osmani of Google (super smart guy, make sure you follow his work) wrote a MUST READ article about JavaScript and CPUs. Addy showed how the cost (time) of parsing JavaScript changes depending on your device and its CPU.

Before we continue, we need to dispel an assumption a lot of developers and SEOs make when dealing with this subject: that the average user has a high-end phone. In reality, they are using something like a Moto G4 (the median device).

Source: https://medium.com/dev-channel/the-cost-of-javascript-84009f51e99e

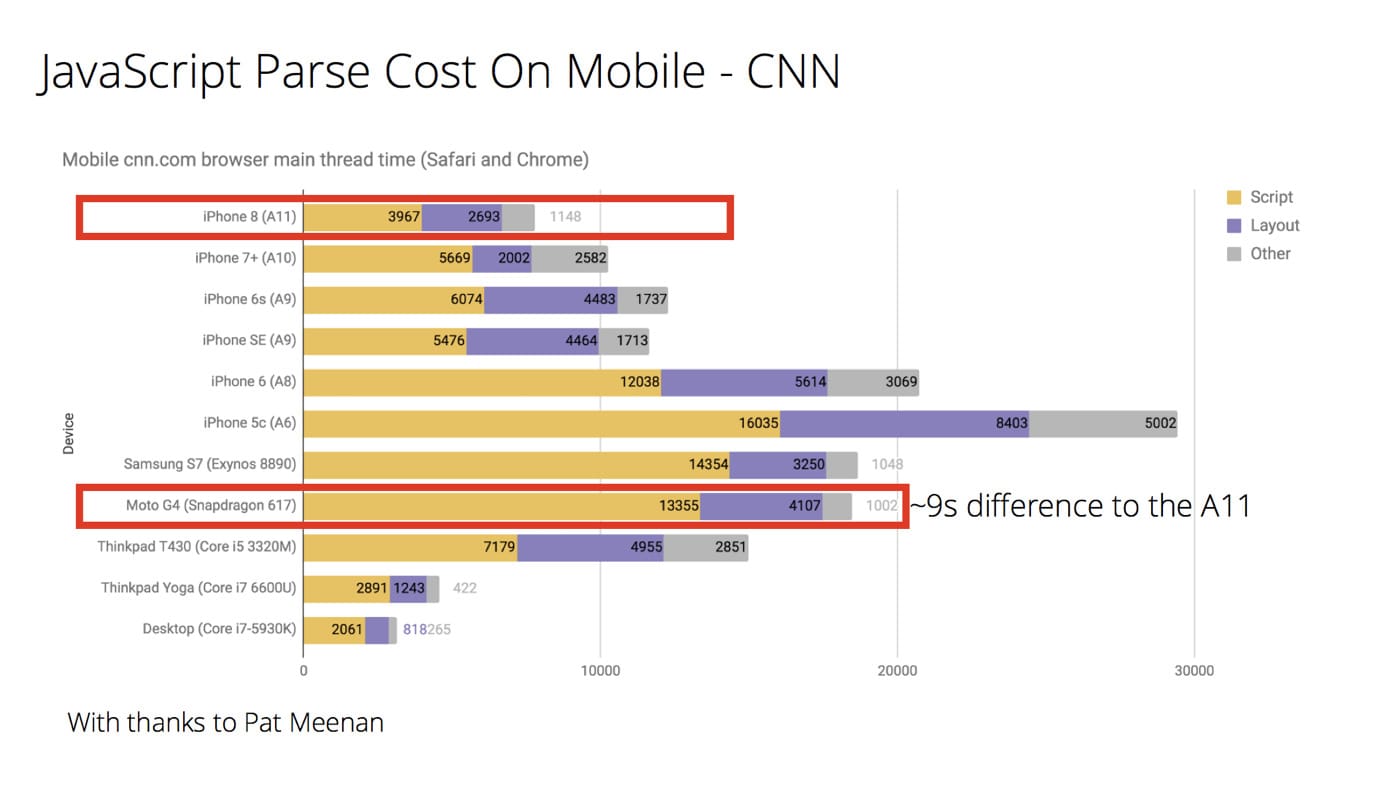

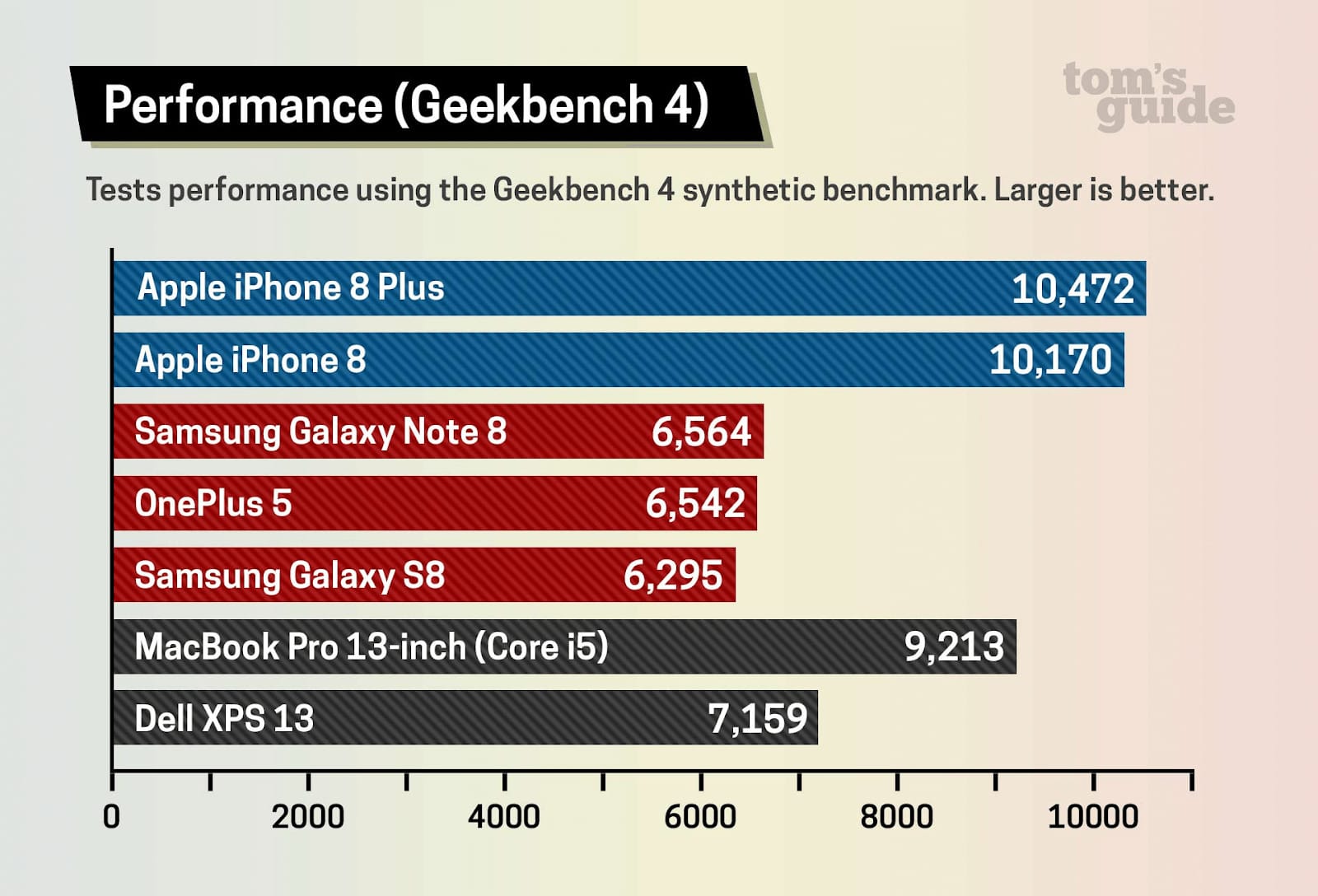

With that in mind, let’s take a website like CNN and compare the difference in JavaScript parse cost between a high-end mobile like an iPhone 8 and the median phone.

(source: https://medium.com/dev-channel/the-cost-of-javascript-84009f51e99e)

As you can see, there is a 9-second(!) difference in parsing the CNN website between an iPhone 8 and a Moto G4. This discrepancy is extraordinary.

Let’s look at another example that I found even more fascinating.

BBC.com

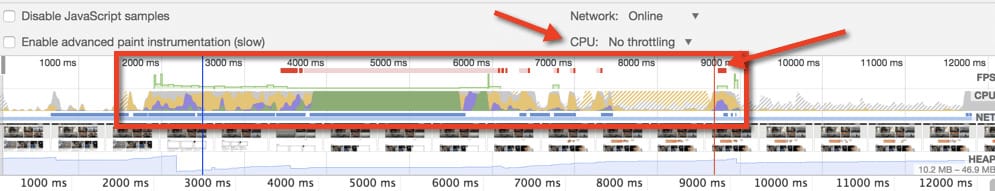

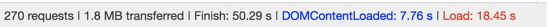

Using a 2017 15-inch MacBook Pro with a top-of-the-line CPU, BBC.com fully loads within ~8-10 seconds.

Even so, my CPU gets hammered during those nine seconds.

How would this performance look on a low/mid-end device?

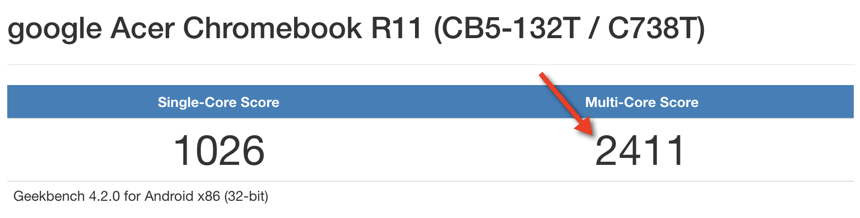

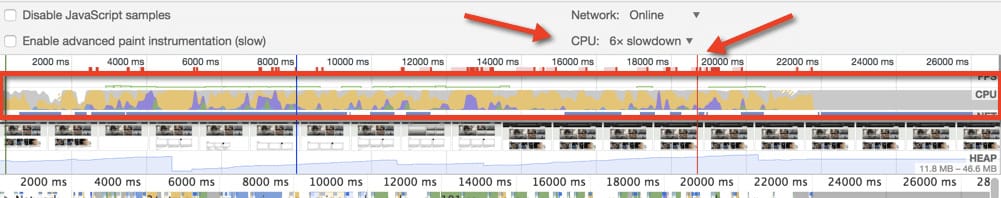

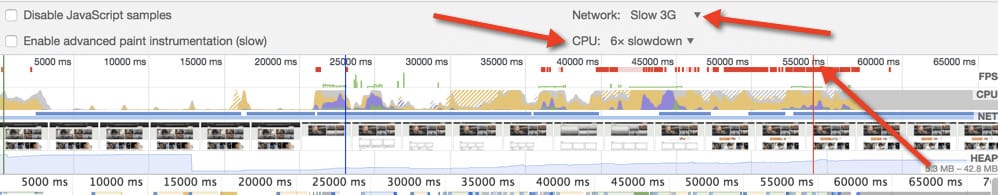

Following Addy’s mobile research above, I decided to look at how a low-end device would handle BBC.com. My methodology was to find a lower-end device that is still popular on the market and whose CPU is 6x slower than my MacBook’s.

Why? Most technical SEOs, developers, etc. use high-end computers and are more tech-savvy than the “average user.”

Here’s an example: this is my laptop’s CPU benchmark from GeekBench.

If we divide this number by 6, we get a Multi-Core score of ~2,640. Now let’s find out whether any modern machines offer this level of CPU performance.
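The arithmetic behind this search is simple: divide your own benchmark score by the slowdown factor and look for devices that land near the result. Here is a minimal sketch, assuming you have collected the benchmark scores yourself. The reference score of 15,840 is only back-calculated from the ~2,640 above, and the device names and scores are hypothetical placeholders, not real GeekBench results.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    multi_core_score: int


def target_score(reference_score: float, slowdown: float = 6.0) -> float:
    """Score a device would need to be `slowdown` times slower than the reference."""
    if slowdown <= 0:
        raise ValueError("slowdown must be positive")
    return reference_score / slowdown


def devices_near_target(devices: list[Device], target: float, tolerance: float = 0.15) -> list[Device]:
    """Return devices whose score is within +/- tolerance (a fraction) of target."""
    low, high = target * (1 - tolerance), target * (1 + tolerance)
    return [d for d in devices if low <= d.multi_core_score <= high]


if __name__ == "__main__":
    # Approximate reference score back-calculated from the ~2,640 target (assumption).
    target = target_score(15_840)
    candidates = [  # hypothetical devices and scores
        Device("hypothetical-budget-laptop", 2_700),
        Device("hypothetical-flagship-phone", 10_000),
    ]
    for device in devices_near_target(candidates, target):
        print(device.name, device.multi_core_score)
```

The tolerance band is a judgment call: real benchmark runs vary, so looking for "close enough" devices is more useful than an exact match.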

For those of you who are not up to date with laptop tech: Chromebooks are laptops running Chrome OS. They are fairly cheap but definitely on the lower end (tech-wise) of the spectrum. The fastest CPU among Chromebooks is still 3 times slower than the CPU in my MacBook (which is not the most powerful laptop on the market).

This is new territory for SEOs, but believe it or not, all of this is now an SEO problem.

Coming back to the average phone, it just so happens that Moto G4’s CPU performance is almost identical to the one in the Chromebook presented above.

Source: https://medium.com/dev-channel/the-cost-of-javascript-84009f51e99e

Let’s have a look at the simulated results for BBC.com on a Chromebook-level CPU.

As you can see, the throttled CPU was sweating from the very first millisecond to push all the scripting through the pipes, and Load Time jumped to 20 seconds(!). Meanwhile, the throttled CPU stayed busy for almost a full minute.

Interesting Fact

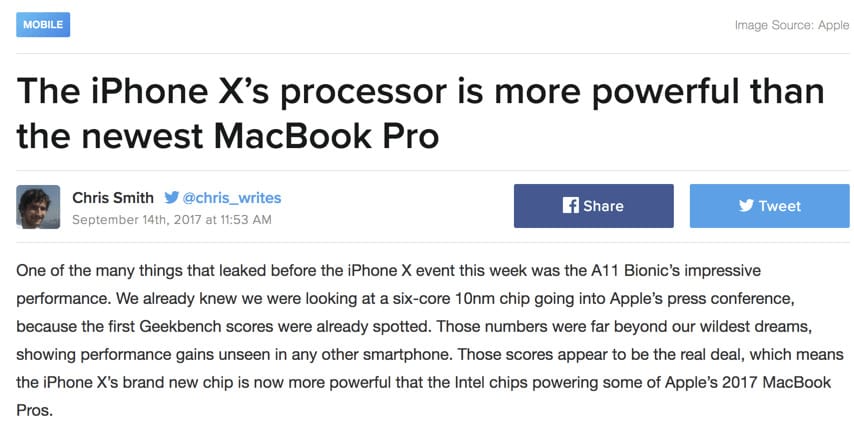

For reasons I can’t understand, the iPhone X’s CPU is more powerful than a lot of laptops out there, including the latest 13-inch MacBook Pro. Unfortunately, the iPhone is definitely an exception: most phones are equipped with CPUs far less powerful than a $1000 iPhone’s.

But we didn’t even touch on the internet connection, right? All this happens on a fast Wi-Fi connection. This brings me to the second point.

- 40% of mobile connections occur over 2G worldwide (yes, the 2G that makes it hard to do anything online).

2G would be too rough, so let’s see what happens when we combine a slow CPU with a slow 3G connection.

BBC.com on a slow 3G + a throttled high-end CPU…

In all fairness, “Load” is a terrible metric to look at. I am using it just to prove a point: to show you how badly a slow CPU affects the whole process. For BBC.com, First Meaningful Paint (FMP) on slow 3G + a slow CPU occurred at around the 25th second. My guess is that if you are willing to wait 25 seconds for the First Meaningful Paint (which you can’t even interact with yet), you are either new to the internet or a zen master.
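To see why a slow CPU and a slow connection compound each other, here is a back-of-the-envelope model: download time is latency plus payload divided by throughput, and parse/execute time scales with the CPU slowdown factor. This is a rough sketch, not how DevTools or Lighthouse compute anything. The "Slow 3G" numbers are an assumption approximating the Chrome DevTools preset (about 400 Kbps down, 2,000 ms latency), and the 1 second of desktop script time is a hypothetical input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NetworkProfile:
    name: str
    download_bytes_per_s: float  # effective throughput
    latency_ms: float            # added per round trip


# Approximation of the Chrome DevTools "Slow 3G" preset (assumption: check your DevTools version).
SLOW_3G = NetworkProfile("Slow 3G", download_bytes_per_s=500_000 / 8 * 0.8, latency_ms=2_000)


def transfer_ms(payload_bytes: int, profile: NetworkProfile, round_trips: int = 1) -> float:
    """Naive download time: latency per round trip plus payload / throughput."""
    return round_trips * profile.latency_ms + payload_bytes / profile.download_bytes_per_s * 1_000


def script_cost_ms(desktop_cost_ms: float, cpu_slowdown: float) -> float:
    """Assume parse/compile/execute time scales linearly with CPU slowdown."""
    return desktop_cost_ms * cpu_slowdown


def estimate_ms(payload_bytes: int, desktop_cost_ms: float, cpu_slowdown: float,
                profile: NetworkProfile) -> float:
    # Worst case: the script must fully download before the CPU can process it.
    return transfer_ms(payload_bytes, profile) + script_cost_ms(desktop_cost_ms, cpu_slowdown)


if __name__ == "__main__":
    # 200 KB of JavaScript, a hypothetical 1 s of desktop CPU time, a 6x slower CPU.
    total = estimate_ms(200_000, desktop_cost_ms=1_000, cpu_slowdown=6, profile=SLOW_3G)
    print(f"~{total / 1000:.1f} s before the script has run")
```

Real browsers stream and parse scripts while they download, so treat this as an upper-bound intuition rather than a prediction.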

Mobile Friendly Test and JavaScript Rendering

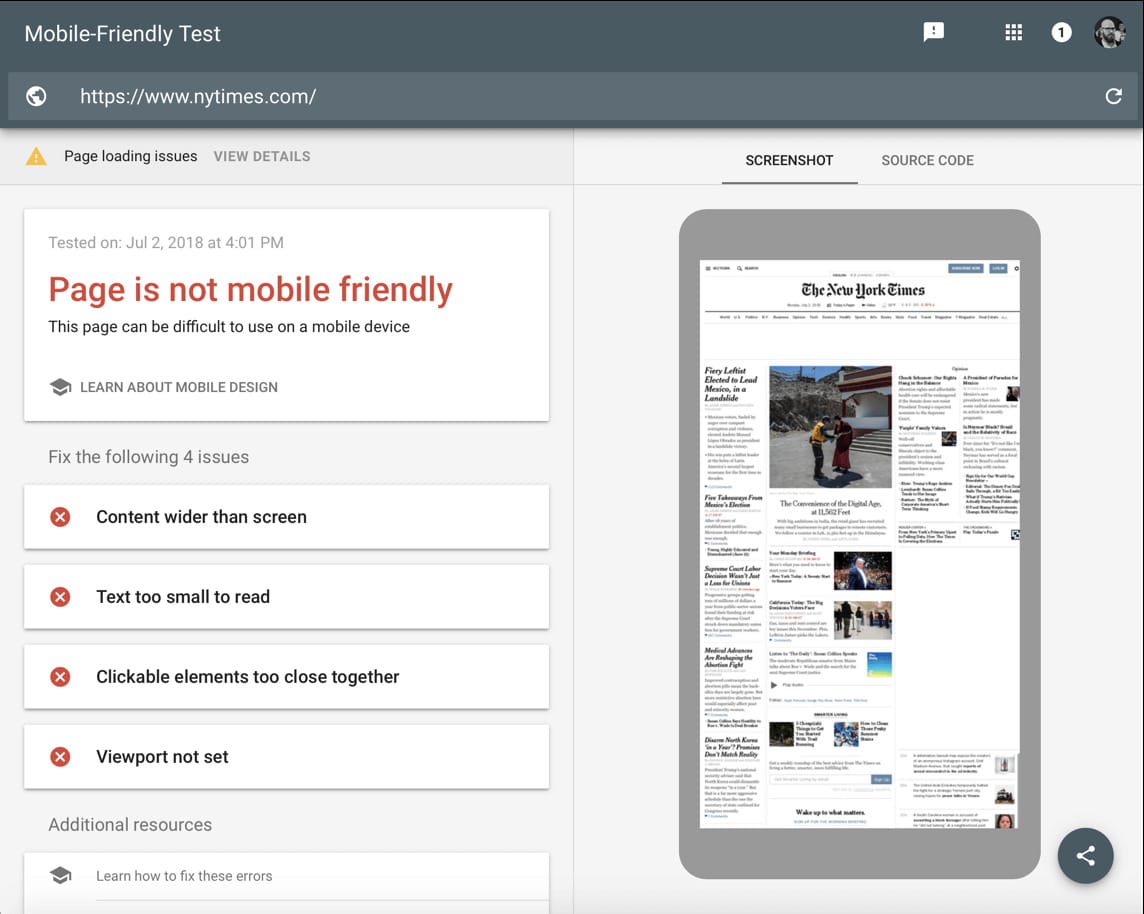

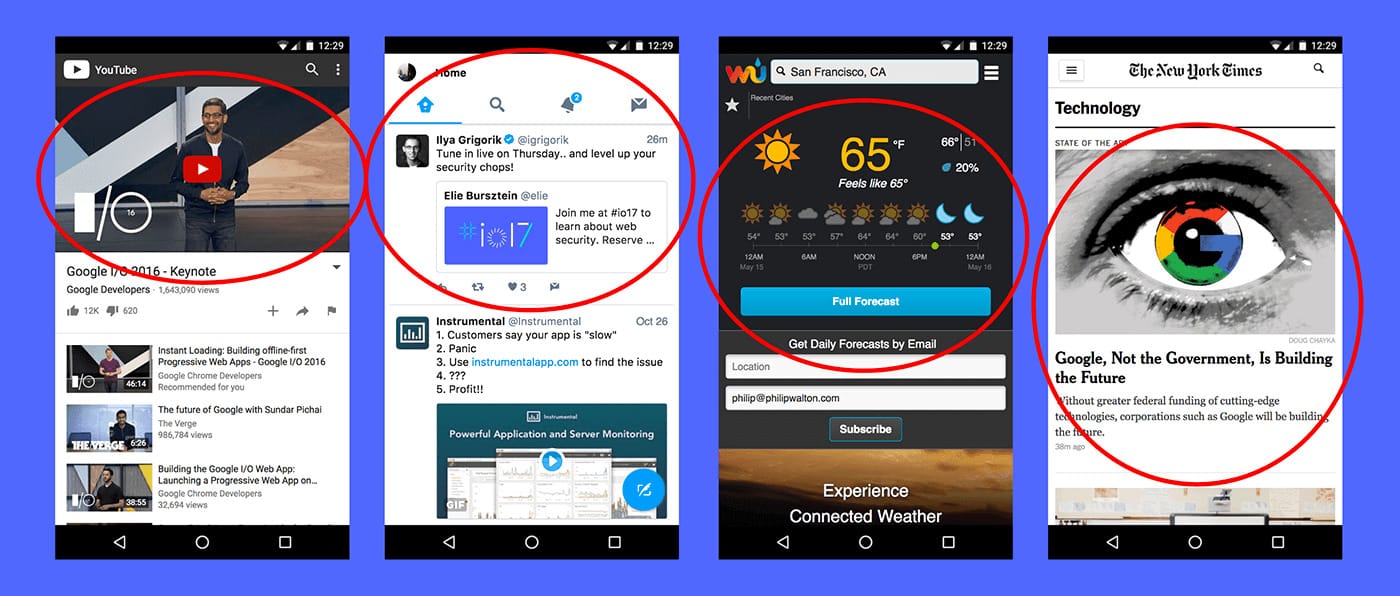

To add to the issues mentioned above, mobile-friendliness is something many websites still seem to struggle with, even in 2018. Let’s take a look at the New York Times’ mobile-friendliness.

Link: https://search.google.com/test/mobile-friendly?id=IQ6rIxWcVZF2AAs5WDxjWg

As you can see from the image above, the page isn’t mobile-friendly. This is the New York Times we are talking about!

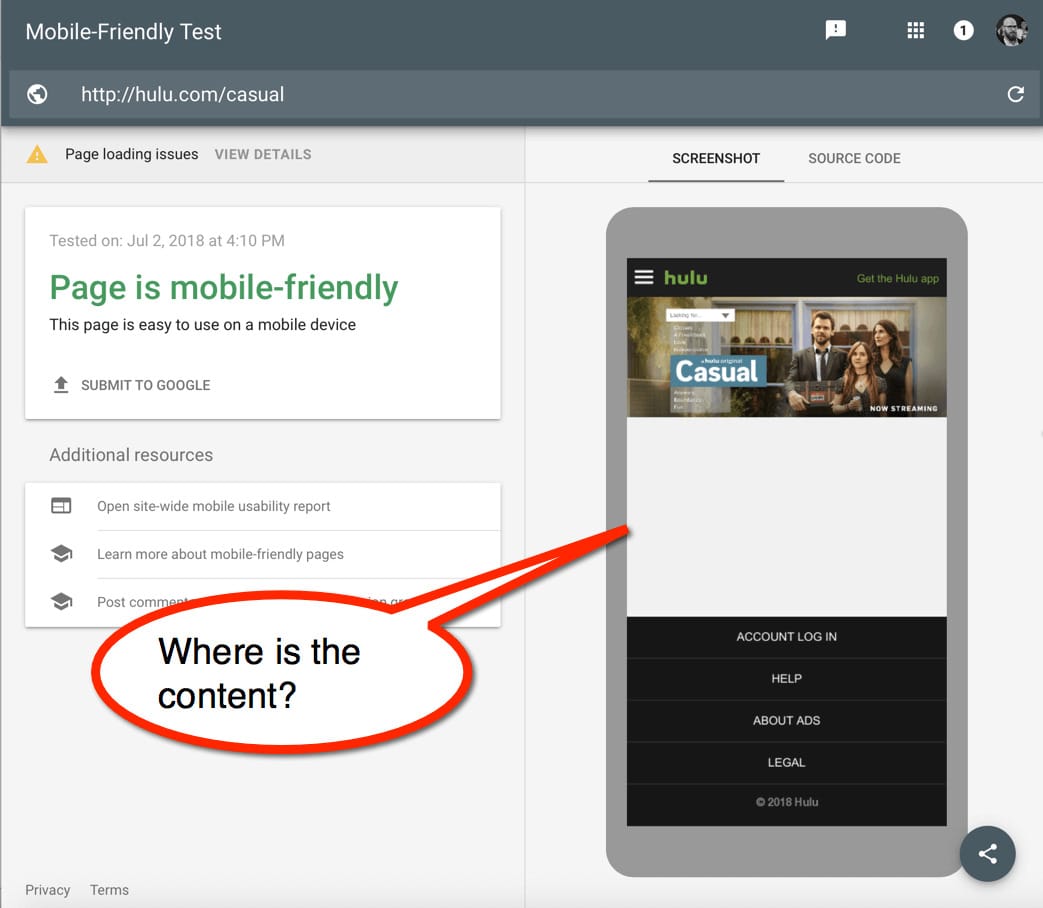

But mobile-friendliness isn’t the only struggle now. It’s become a lot more complicated with JavaScript powering so many modern websites. Let’s have a look at how Google sees some of the major websites.

Link: https://search.google.com/test/mobile-friendly?id=fckavKr0UWfVS7Ls_GKmew

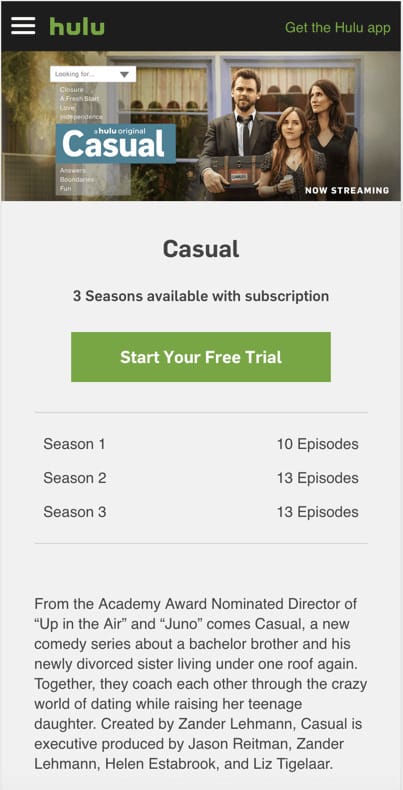

The screenshot above should look like the one below.

As you can see, JavaScript can make your website’s content completely disappear!
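One quick way to spot content that only exists after JavaScript runs is to compare the text in the raw HTML response with the text in the rendered DOM (for example, the HTML shown by the Mobile-Friendly Test or a headless browser). A minimal sketch using only Python’s standard library; how you obtain the two HTML strings is up to you, and the sample markup is hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(" ".join(data.split()))  # normalize whitespace


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def js_only_phrases(raw_html: str, rendered_html: str, phrases: list[str]) -> list[str]:
    """Phrases present in the rendered DOM but missing from the raw HTML."""
    raw, rendered = visible_text(raw_html), visible_text(rendered_html)
    return [p for p in phrases if p in rendered and p not in raw]


if __name__ == "__main__":
    raw = '<html><body><div id="app"></div><script src="app.js"></script></body></html>'
    rendered = '<html><body><div id="app"><h1>Fresh pizza delivered</h1></div></body></html>'
    print(js_only_phrases(raw, rendered, ["Fresh pizza delivered"]))
```

Anything this returns depends entirely on JavaScript rendering, which is exactly the content at risk of disappearing for Googlebot.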

This brings us to our second horseman…

The Second Horseman – JavaScript

JavaScript isn’t one of those peripheral geeky topics anymore. If you are an SEO, JavaScript IS front and center, and it IS your problem to deal with. And when it comes to mobile websites, many of us (myself included) were under the mistaken assumption that they ship less JavaScript than desktop websites.

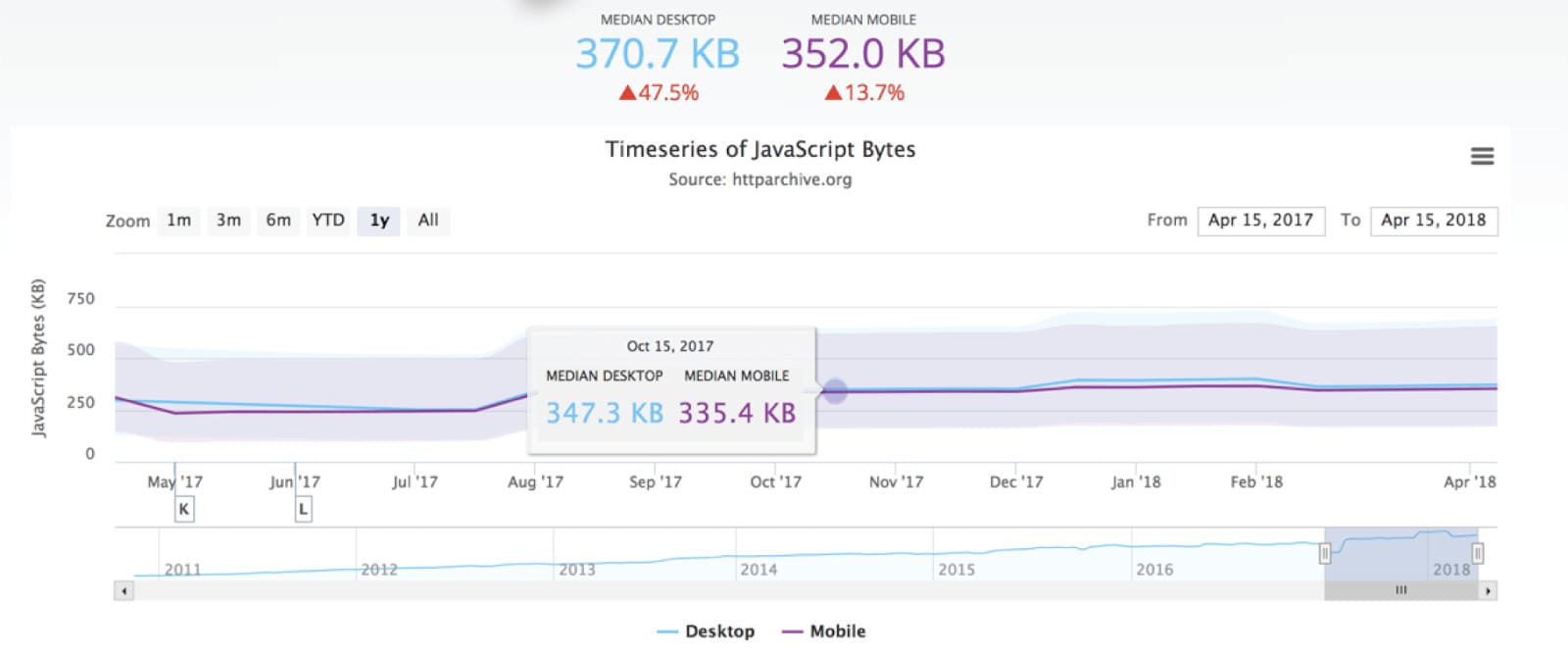

Let’s go to the HTTP Archive to see the exact statistics.

Source: https://httparchive.org/reports/state-of-javascript

The difference between desktop and mobile is only 18.7 KB, a 5% difference. At the same time, some mobile devices are even less powerful than the previously mentioned Chromebook or Moto G4. In fact, let me show you how much “damage” 200 KB of JavaScript can do to your page.

JavaScript is one of the biggest performance offenders nowadays. With the exponential growth of JavaScript frameworks, we can be sure it’s not going to change anytime soon. Whoever is developing a website and wants it to meet the latest trends is put in the difficult position of having to decide between performance and all those bells and whistles delivered by client-side JS.

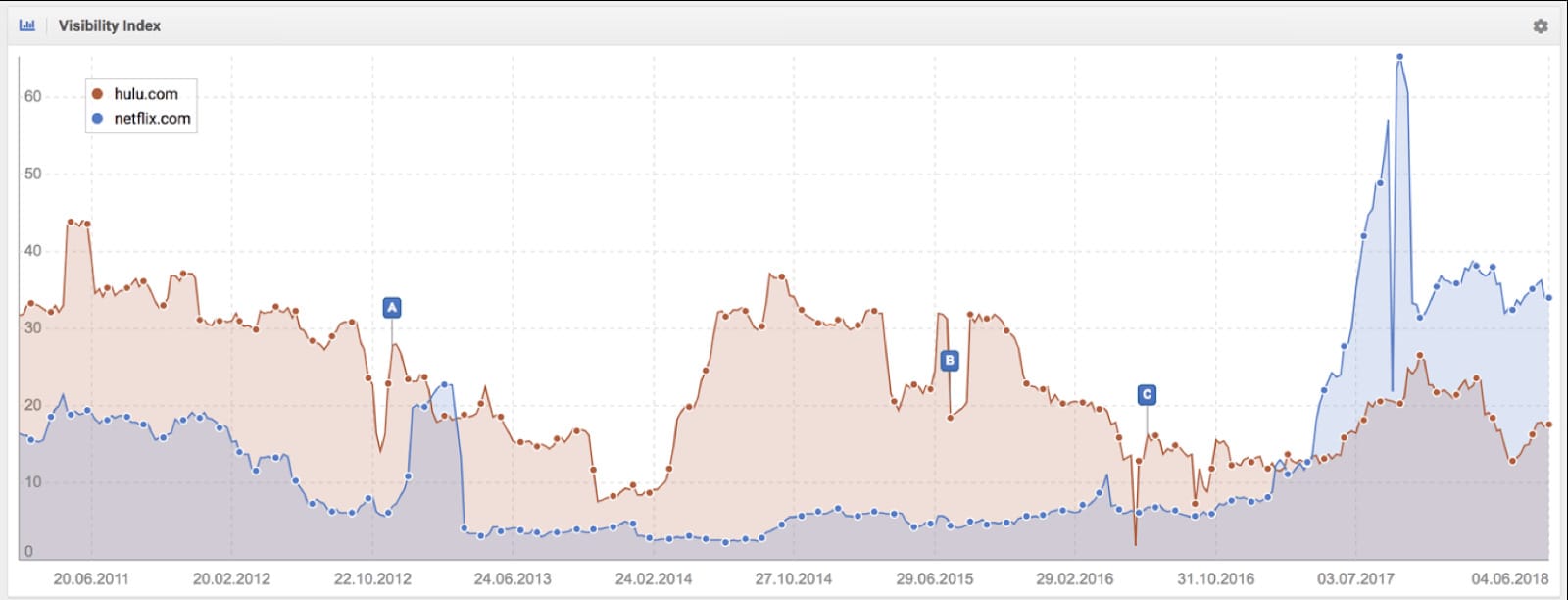

If that wasn’t enough, JavaScript can come with a huge SEO cost: potential ranking drops, indexing problems, etc. I won’t go through this in detail here, as Tomek from our team wrote a massive, in-depth article explaining JavaScript SEO, but you can see how a bad JavaScript implementation can hurt your visibility even if you are a big player like Hulu.com.

All the problems mentioned above can make JavaScript look like something to avoid. I think it is important to state here that:

JAVASCRIPT IS GREAT, IF DONE WELL!

The problem is that finding developers who can do it really well seems to be a challenge. At least this is what we are hearing from most of our clients.

My advice: if you have a great team of developers who are up to date with the latest trends and, at the same time, have a solid background and experience, keep them. There are websites out there that deliver a WOW user experience with JavaScript while remaining both fast and SEO-friendly (Netflix is a good example).

If you are not too sure about your dev team, I would avoid client-side JavaScript until you are 100% certain it won’t do more harm than good, won’t kill your rankings, or… won’t slow down your website on mobile devices with slower CPUs.

This brings us to the third horseman…

The Third Horseman – Performance

Performance has been a ranking factor since 2010, but it was based on desktop traffic and crawls, so it was fairly easy to measure and predict a website’s performance. As of July 2018, page speed is also a ranking factor in mobile search.

This is complicated, though, as Google can’t measure mobile performance during crawls (due to the aforementioned factors, like CPU performance and internet connection speed, which vary among users). This is why they recently released CrUX (the Chrome User Experience Report), which shows real-user performance data from Chrome, but more on this later.
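CrUX data can also be pulled programmatically. The sketch below assumes a response shaped like the one returned by the CrUX API’s `records:queryRecord` method (a `record.metrics` object whose entries carry `percentiles.p75`, queried with an `origin` and a `formFactor` such as `PHONE`). Verify the schema against the current CrUX API documentation before relying on it; the sample values are hypothetical, not real measurements.

```python
import json


def p75_by_metric(response: dict) -> dict[str, float]:
    """Map each metric name to its 75th-percentile value from a CrUX-style response."""
    metrics = response.get("record", {}).get("metrics", {})
    result = {}
    for name, data in metrics.items():
        p75 = data.get("percentiles", {}).get("p75")
        if p75 is not None:
            result[name] = float(p75)  # some metrics (e.g. layout shift) come back as strings
    return result


def build_query(origin: str, form_factor: str = "PHONE") -> str:
    """JSON request body scoped to one origin and (by default) mobile users."""
    return json.dumps({"origin": origin, "formFactor": form_factor})


if __name__ == "__main__":
    sample = {  # hypothetical values
        "record": {
            "metrics": {
                "first_contentful_paint": {"percentiles": {"p75": 2100}},
                "largest_contentful_paint": {"percentiles": {"p75": 3900}},
            }
        }
    }
    print(build_query("https://www.example.com"))
    print(p75_by_metric(sample))
```

Looking at the 75th percentile for phones, rather than an average, keeps the slow-CPU, slow-network users discussed above in the picture.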

This is quite a lot of data. The closer we look at performance on mobile devices, the more complicated it gets. After reading about the first two horsemen, you already have an idea of some of the areas where it can get complex or even confusing. Now let me show you how it gets even worse from here.

How we should understand performance in 2018 isn’t clear. Google and others have tried to define it, but there are a lot of technical difficulties in measuring it at scale. One thing is certain: we are moving (or have already moved) to user-centric metrics. Web development has evolved a lot over the last few years, and using the “Load Time” metric is as last season as having “Kelly Family” posters on the wall.

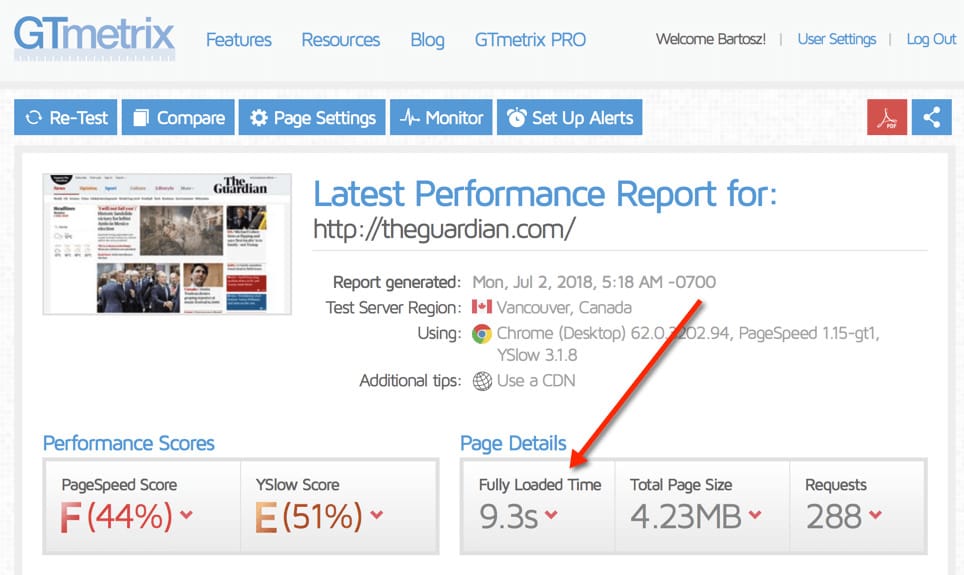

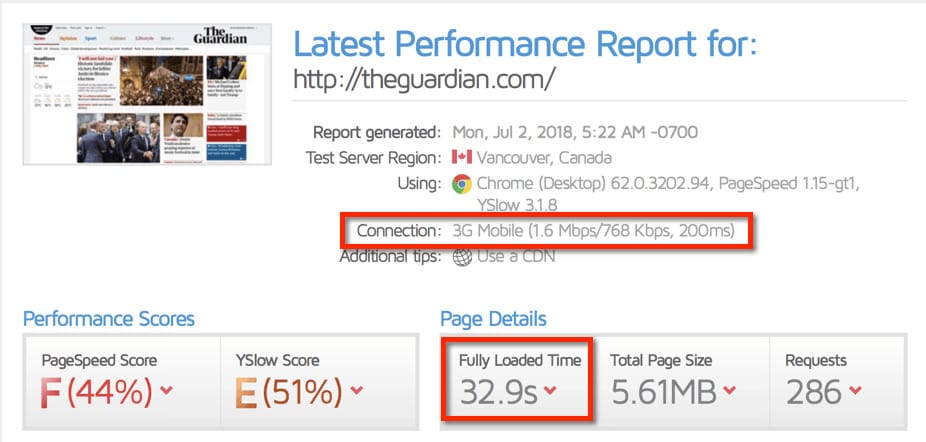

Let me give you a very interesting visual example using a popular UK newspaper website: The Guardian.

So how does such a high-ranking and popular website do? If you look at the screenshot above, The Guardian took 9.3 seconds to load on a desktop. This gets much worse once we change our connection speed to 3G.

I won’t even dwell on its really bad PageSpeed and YSlow scores; they are just the cherry on top. Now, the fun fact is that The Guardian is one of the fastest websites out there. Both The Guardian and Amazon are considered performance superstars, and developers look at them in awe. This is an example of both webmasters and tools focusing on the completely wrong metric, one that often has nothing to do with user experience and REAL performance. It is also exactly where Fully Loaded Time turns out to be a terrible metric: if you open The Guardian on your mobile right now, it will load within a couple of seconds. Definitely a far cry from 30+ seconds.

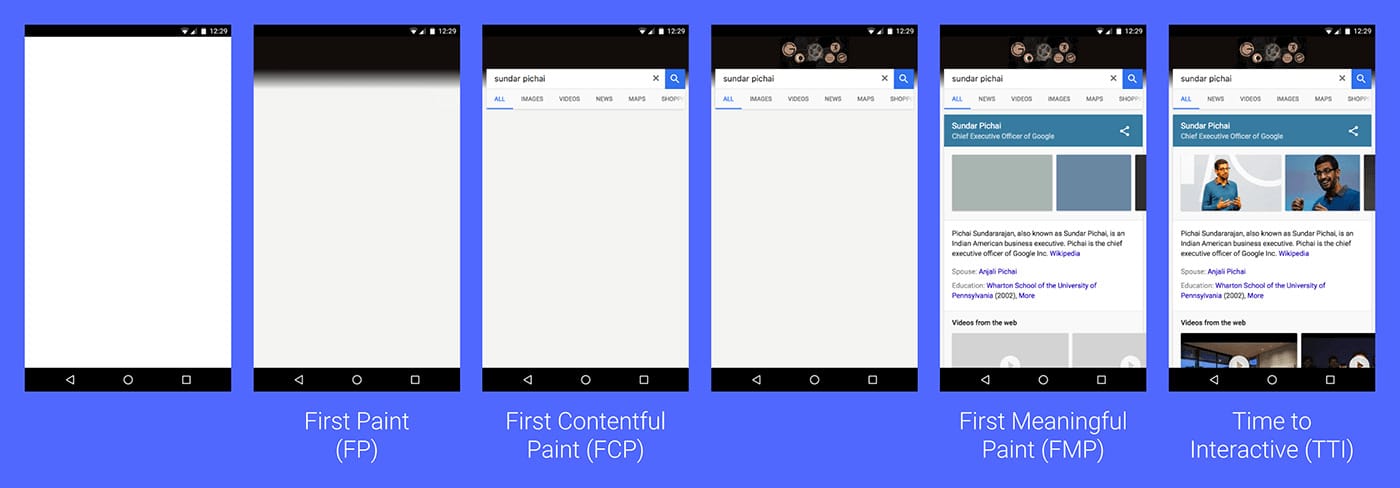

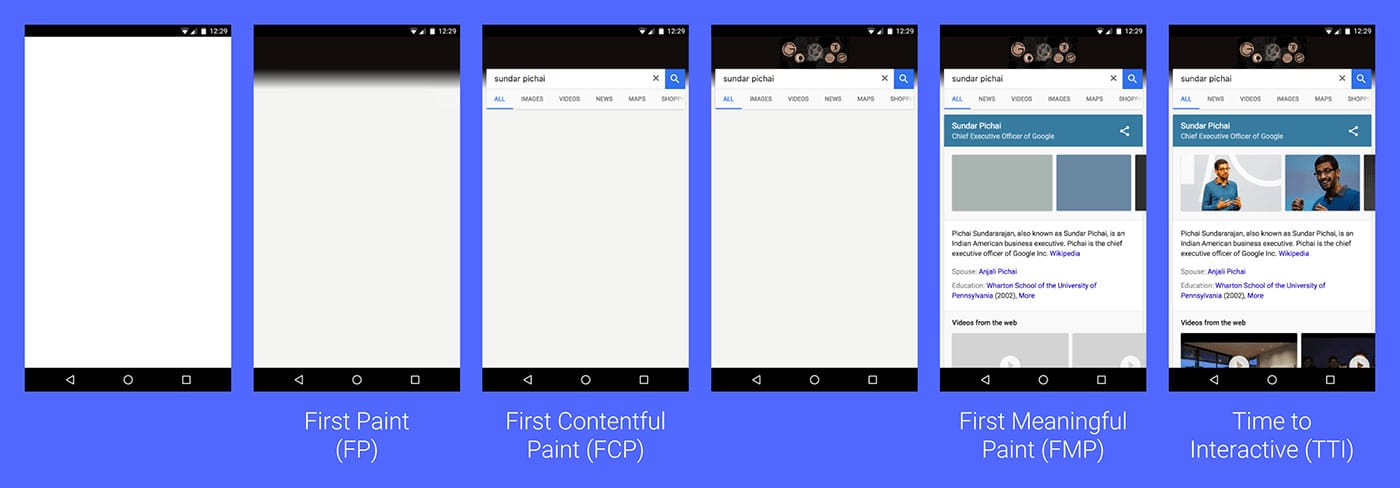

User-Centric Performance Metrics

User-centric performance metrics reflect REAL performance, meaning they measure how soon you see the content you were looking for, or how soon you can interact with it. The Fully Loaded metric doesn’t reflect any of these things. This metric, still considered a key metric by so many performance tools, is also not listed in the screenshot below.

Source: https://web.dev/user-centric-performance-metrics/
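If you run Lighthouse with JSON output (for example, `lighthouse <url> --output=json --output-path=report.json`), you can read user-centric metrics straight from the report instead of looking at load time. A minimal sketch, assuming the report’s `audits` object uses audit IDs such as `first-contentful-paint` and `interactive` with a `numericValue` in milliseconds, as recent Lighthouse versions do. Audit IDs change between versions (First Meaningful Paint was later dropped), so missing audits are simply skipped.

```python
import json

# Audit IDs to look for; availability depends on the Lighthouse version.
USER_CENTRIC_AUDITS = {
    "first-contentful-paint": "First Contentful Paint",
    "first-meaningful-paint": "First Meaningful Paint",
    "interactive": "Time to Interactive",
}


def user_centric_metrics(report: dict) -> dict[str, float]:
    """Return {readable name: milliseconds} for the audits present in a report."""
    audits = report.get("audits", {})
    found = {}
    for audit_id, label in USER_CENTRIC_AUDITS.items():
        value = audits.get(audit_id, {}).get("numericValue")
        if value is not None:
            found[label] = float(value)
    return found


def load_report(path: str) -> dict:
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


if __name__ == "__main__":
    sample = {"audits": {  # hypothetical values
        "first-contentful-paint": {"numericValue": 1800.0},
        "interactive": {"numericValue": 7400.0},
    }}
    for name, ms in user_centric_metrics(sample).items():
        print(f"{name}: {ms / 1000:.1f} s")
```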

Now, let’s dive deeper into the new metrics. Before we do that, though, let me just mention that all of them depend HEAVILY on both JavaScript and mobile performance (CPU). It is also no coincidence that the image above shows a mobile device screenshot.

First Meaningful Paint

Let’s start with my favorite metric: First Meaningful Paint. FMP measures how quickly you can see the “hero elements.” To give you an example of a hero element: if you are checking the weather, your hero element is tomorrow’s forecast. If you are looking for a definition of the word “sudorific”, your hero element is the definition of this word.

Source: https://web.dev/user-centric-performance-metrics/

Now, this seems like a great and easy definition, but it is currently impossible to track FMP at scale. Machines don’t know what the hero element is and therefore cannot track it.

Let me quote Google here:

“We don’t yet have a standardized definition for FMP (and thus no performance entry type either). This is in part because of how difficult it is to determine, in a generic way, what “meaningful” means for all pages.”

source: https://developers.google.com/

In my opinion, FMP is one of the key metrics for web performance today; however, I am fairly sure it won’t become a ranking factor anytime soon.

Time to Interactive

Time to Interactive is the moment when the website becomes usable and interactive. For example, if you are on YouTube, this would be the moment when you can press play or change the volume. We can expect Google to track this metric pretty soon in Chrome.
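Lab tools such as Lighthouse estimate Time to Interactive roughly like this: starting from First Contentful Paint, look for the first 5-second window with no long tasks (main-thread tasks over 50 ms), and report the end of the last long task before that window, or FCP if there is none. The sketch below implements only that simplified idea and ignores the network-quiet condition Lighthouse also checks; the task timings are hypothetical.

```python
from typing import Optional

LONG_TASK_MS = 50        # tasks longer than this count as "long tasks"
QUIET_WINDOW_MS = 5_000  # required stretch with no long tasks


def time_to_interactive(
    fcp_ms: float,
    tasks: list[tuple[float, float]],
    trace_end_ms: float,
) -> Optional[float]:
    """Simplified TTI: end of the last long task before a 5 s quiet window.

    tasks are (start_ms, end_ms) pairs for main-thread tasks, in any order.
    Returns None if the trace ends before a full quiet window is observed.
    """
    long_tasks = sorted(
        (start, end)
        for start, end in tasks
        if end - start > LONG_TASK_MS and end > fcp_ms
    )
    candidate = fcp_ms  # TTI is never earlier than First Contentful Paint
    for start, end in long_tasks:
        if start - candidate >= QUIET_WINDOW_MS:
            return candidate  # a quiet window ended before this long task began
        candidate = max(candidate, end)
    if trace_end_ms - candidate >= QUIET_WINDOW_MS:
        return candidate
    return None


if __name__ == "__main__":
    tasks = [(1_200, 1_500), (2_000, 2_040), (3_000, 3_400), (9_000, 9_100)]
    print(time_to_interactive(fcp_ms=1_000, tasks=tasks, trace_end_ms=15_000))
```

This is also why a slow CPU hurts TTI so much: the same scripts turn into more and longer long tasks, pushing the first quiet window further out.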

Performance metrics used by Google as ranking factors

The two metrics above would make perfect ranking factors; unfortunately, they currently can’t be tracked for real users. However, I still cannot understand why Google decided to track two other metrics as determinants of website performance:

- DCL – DOM Content Loaded

- First Contentful Paint

The problem with both metrics above is that they are completely irrelevant to users. DOM Content Loaded can be bad even for very fast websites (I’ll show you an example of this in a second). First Contentful Paint marks the moment the browser renders the first piece of content from the DOM. This could be an image, text, etc.

Source: https://web.dev/user-centric-performance-metrics/

Even Googlers admit that DCL isn’t a great metric.

“…traditional performance metrics like load time or DOMContentLoaded time are extremely unreliable since when they occur may or may not correspond to when the user thinks the app is loaded.”

source: https://developers.google.com/

Yet, DOM Content Loaded and First Contentful Paint are the only two metrics presented in Google’s PageSpeed Insights, which is a core tool for a lot of developers. I think this may shift webmasters’ focus toward optimizing what they see rather than real user experience.
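PageSpeed Insights also has an API, which makes it easy to check many pages with the mobile strategy. A minimal sketch that only builds request URLs for version 5 of the API (`runPagespeed` with `url` and `strategy` parameters); sending the requests is left to you, an API key is recommended for anything beyond occasional use, and which field metrics come back has changed since this article was written.

```python
from typing import Optional
from urllib.parse import urlencode

# Version 5 endpoint of the PageSpeed Insights API.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str = "mobile", api_key: Optional[str] = None) -> str:
    """Build a runPagespeed request URL, defaulting to the mobile strategy."""
    if strategy not in ("mobile", "desktop"):
        raise ValueError("strategy must be 'mobile' or 'desktop'")
    params = {"url": page_url, "strategy": strategy}
    if api_key:
        params["key"] = api_key
    return f"{PSI_ENDPOINT}?{urlencode(params)}"


if __name__ == "__main__":
    for page in ["https://www.example.com/", "https://www.example.com/news/"]:
        print(psi_request_url(page))
```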

Let’s take a step back though and see how this would match with Mobile-First and JavaScript.

Looking at the big picture, getting a website to perform well is a huge challenge now. We need to look carefully at how we use JavaScript and at mobile-friendliness, and, on top of that, all the performance metrics have to be in line. This seems to be a huge challenge in 2018 and beyond, and I don’t think we all realize how complex it is.

Even the biggest websites out there seem to have a problem keeping up with the latest trends in SEO. Here are some examples of this…

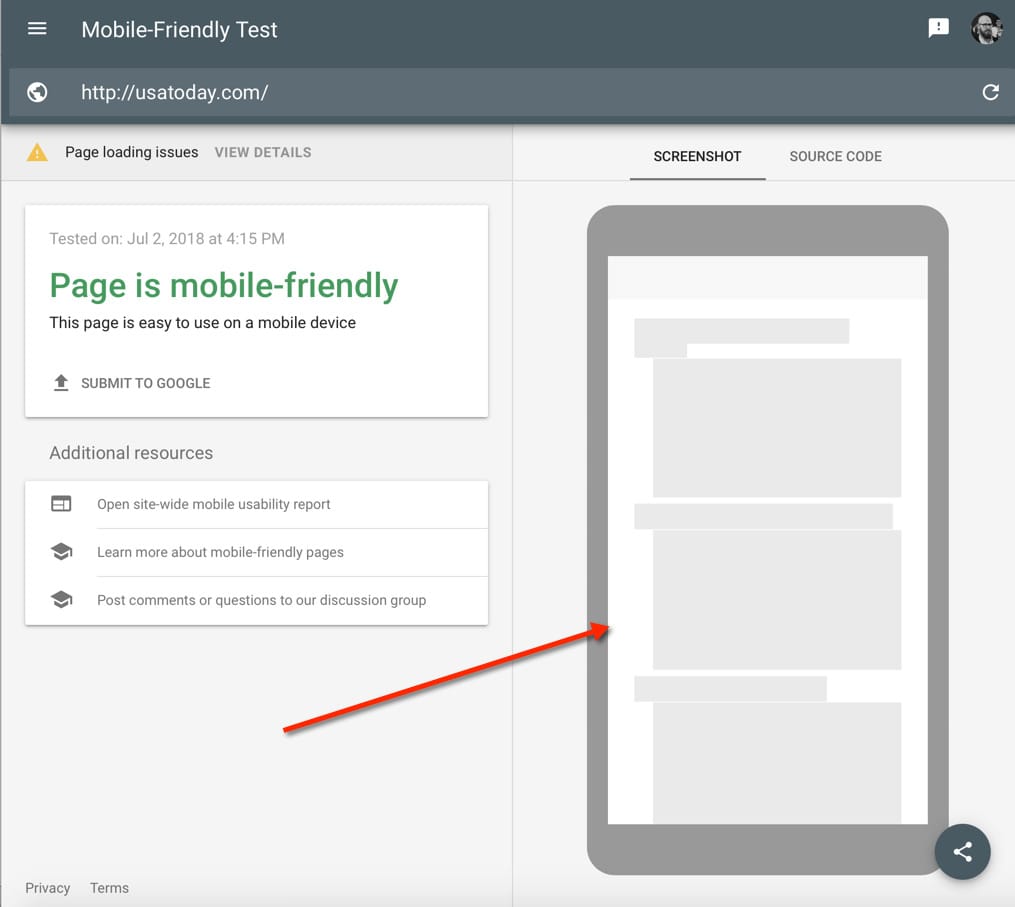

USA Today

As you can see above, USA Today seems to be invisible to Googlebot mobile. This may become a huge problem once they are moved to mobile-first indexing!

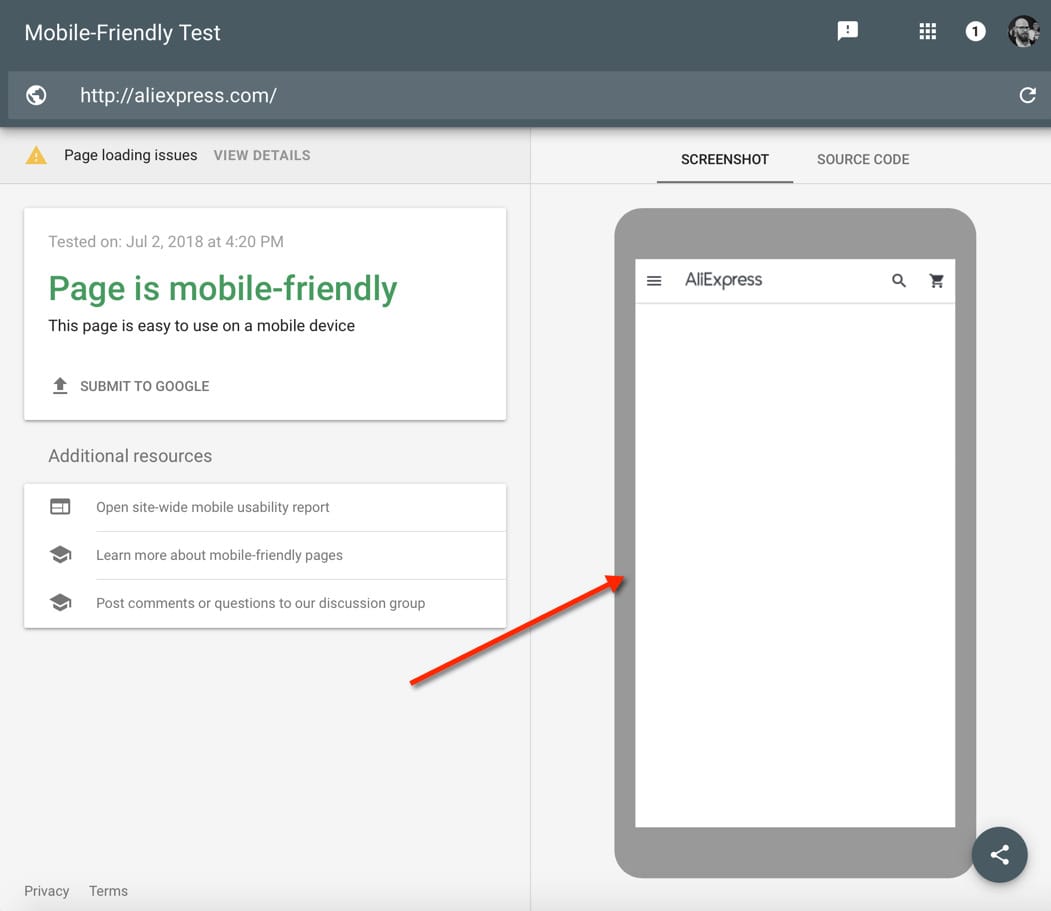

Aliexpress

Aliexpress is known for its thought leadership in the world of web development, so I expected much more from their dev team. This issue also reflects very badly on their rankings.

This problem is massive and spreading. In many cases, this can cause a partial or total de-indexing from Google.

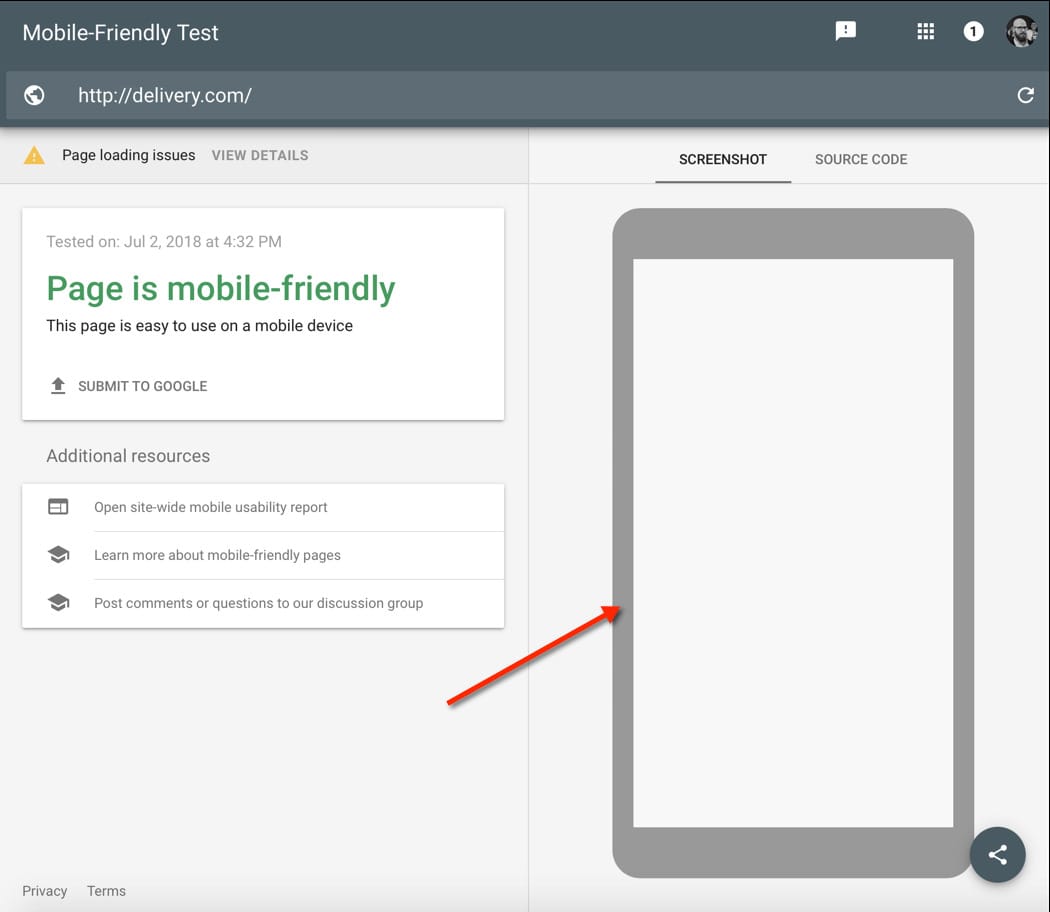

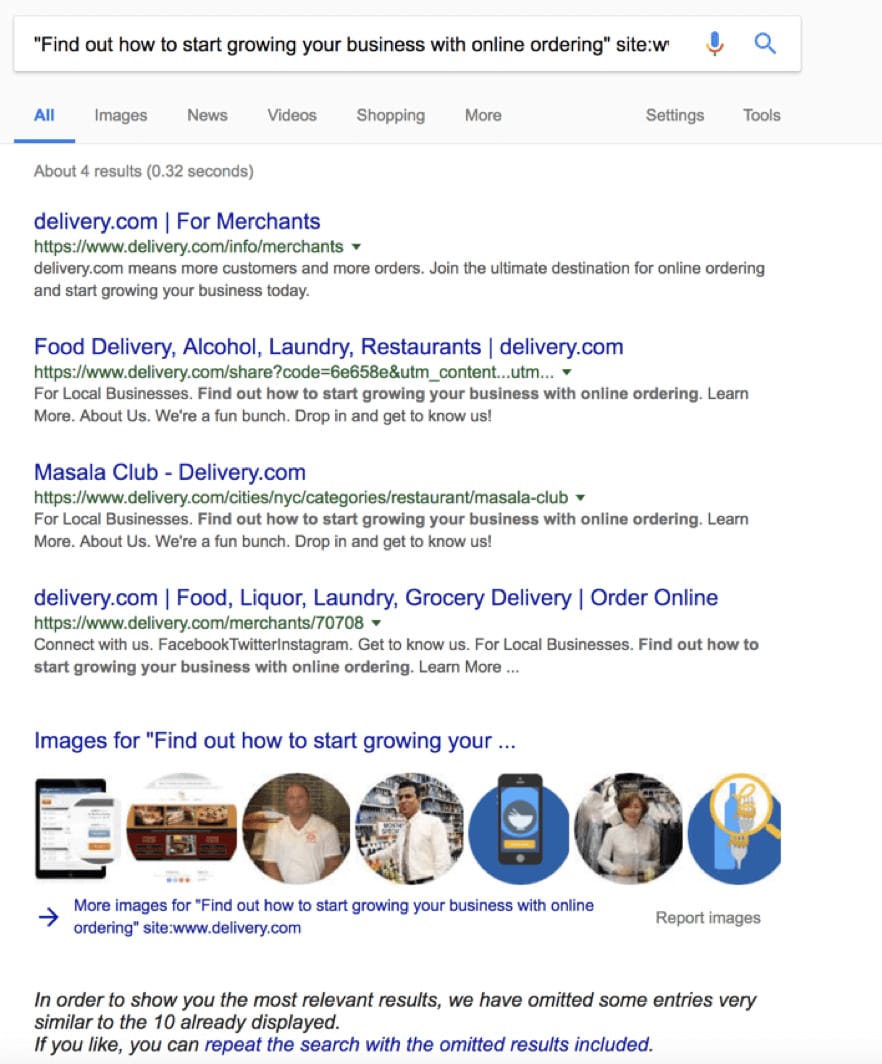

Delivery.com

Delivery.com is a perfect example.

If we copy any piece of content from their homepage and search for it in Google, their homepage doesn’t show up.
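You can script the first half of this check: take a sentence from the page, wrap it in quotes for an exact-match search, and optionally restrict it to the site with the `site:` operator. A minimal sketch that only builds the Google search URL; open the URLs by hand rather than scraping results. The domain and phrase in the example are hypothetical.

```python
from typing import Optional
from urllib.parse import urlencode


def exact_match_query_url(phrase: str, domain: Optional[str] = None) -> str:
    """Google search URL for an exact-match phrase, optionally limited with site:."""
    query = f'"{phrase}"'  # quotes ask Google for this exact phrase
    if domain:
        query = f"site:{domain} {query}"
    return "https://www.google.com/search?" + urlencode({"q": query})


if __name__ == "__main__":
    # Hypothetical homepage sentence; paste a real one from the page you are checking.
    print(exact_match_query_url("Order food online from local restaurants", "example.com"))
```

If a sentence that is clearly on the page returns nothing, that content was most likely never indexed, often because it only appears after JavaScript runs.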

I believe that mastering JavaScript SEO, understanding mobile-first, and making sure your performance is great are the three main factors to dominate (or stay included in) search engines going forward.

Let me show you one more example that is quite iconic and perfectly illustrates my point.

Netflix and Hulu were always fighting for SEO traffic. This has been going on for years. Hulu was dominating and Netflix was trying to get close to Hulu’s traffic.

It so happens that both Hulu and Netflix are early adopters of JavaScript. However, Hulu is now struggling with a lot of JavaScript issues (as I already mentioned), while Netflix is paving a new way of JavaScript performance and indexing best practices.

This is the result:

Netflix (blue) is dominating Hulu for the first time in years. It is one of the most interesting case studies of using JavaScript well: focusing on performance, indexing, and user experience to dominate in SEO.

Netflix isn’t concerned about the Horsemen because they have already conquered them. They understand Mobile-First, they are thought leaders in the web dev community (especially around React), and, lastly, they have a deep understanding of web performance.

Their case study of replacing client-side React with plain JavaScript to improve performance by 50% went viral in the community and was even picked up by Jake Archibald from Google. You can watch the full video, and you can be sure that this is where SEO and web development are heading.

Summary

I remember talking about JavaScript SEO two years ago and back then even I wasn’t 100% sure if this would be the “thing” in the future. Now I am amazed at how JavaScript, performance, and mobile have made their way into the mainstream.

The technology is growing at an amazing pace and I am 100% sure that this speed is overwhelming for Google too. I have a lot of examples that would prove it, but this is a topic for another lengthy article.

My point here is that we don’t control the pace of the changes anymore. Neither does Google. Web developers, small players, huge players… everyone is trying to ride the latest technologies and they are becoming more and more complex.

On the other hand, I’m surprised by how calm SEOs are these days in the face of the apocalypse. It amazes me how the biggest websites worldwide can lose 60-70% of their traffic and not react for 6 months or longer.

I hope that this article will be an eye-opener for a lot of SEOs, especially those who work with all those big players. Maybe they don’t realize that most of these issues aren’t that hard to diagnose and fix, as long as you understand how web technology is changing and how to work with, rather than fight against, the three horsemen of the apocalypse.

And if you need someone to deal with the horsemen of the apocalypse on your website, you can use Onely’s technical SEO services.

Hi! I’m Bartosz, founder and Head of SEO @ Onely. Thank you for trusting us with your valuable time and I hope that you found the answers to your questions in this blogpost.

In case you are still wondering how exactly to move forward with fixing your website’s technical SEO, check out our services page and schedule a free discovery call where we will do all the heavy lifting for you.

Hope to talk to you soon!